Cloud Computing, HPC and Manufacturing: A Conversation with Bill Feiereisen

These days, Bill Feiereisen, madcap bicycling enthusiast, DM-Report contributing editor and Senior Scientist and Corporate Strategist in High Performance Computing (HPC) at Intel, has his head in the clouds.

We got him to park his racing bike long enough to gather some of his thoughts on the direction that HPC is taking as it encounters the rapidly growing, diverse and changing discipline known as cloud computing. With, of course, an emphasis on manufacturing.

“HPC is undergoing commoditization at all levels – hardware, software and applications,” Feiereisen says. “As a result, people are beginning to look at the possibility of accessing HPC using commodity delivery mechanisms. Rather than the traditional approach of obtaining cycles from the datacenter, researchers, analysts and engineers are investigating on-demand computing based in the cloud. It’s not just that people are beginning to do more work with commercial cloud providers like Amazon or Google; they are physically changing the way they access applications and responding to new ways that applications are being presented.”

Feiereisen comments that back in the not-so-distant “old days” of HPC at the big labs or universities, you would make your request for time, get an ID and password, and log in using SSH. What you immediately saw was a command line prompt – and from that point on, you were on your own.

This is pretty much still the case today. The big labs have been serving a user base that is quite sophisticated and is just fine with a command line prompt. There have been some efforts over the years to add more features to the prompt itself, but when it comes to running the application, you had better be well versed in interacting with a multicore system and the world of parallel programming.

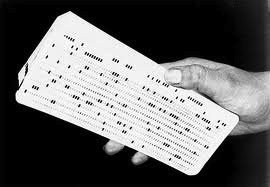

Just how long has this been going on? At least 40 years. Recalls Feiereisen, “I started scientific programming in the early 1970s – we were working with punch cards and programming in FORTRAN running on a DC 6024 Datacraft computer. The first few cards you punched for a job were a call for JCL – job control language. Even when we made the big leap forward to using keyboards, you were still submitting JCL calls to a command line to get underway. Well, even last year, if you were to log in to the supercomputers at Sandia or Los Alamos, you would find that not much has changed – you are still expected to submit your job through the command line.”

These days you can get some expert help with workflow and job scheduling using software such as Platform Computing’s LSF, Adaptive Computing’s Moab, or the open source SLURM. But with the cloud, your options and the business model in general are changing. HPC is becoming more accessible.

Goodbye, Command Line

“If you want to, you can still rent a virtual image in the cloud and work off the command line,” he says. “But today there are a bunch of enterprising people who are making a business out of layering software on top of the command line and packaging up the whole virtual machine image – the representation of the hardware, software and applications – and putting it on a disc somewhere so you as a customer can easily call up the entire package. In many cases the interface is through a web browser or the actual GUI that a manufacturing user would employ if running jobs on Ansys or CD-adapco software.

“It’s an interesting business model,” Feiereisen continues. “If you or I went straight to Amazon and pulled one of their plain vanilla machines off the shelf to run using the command line, the cheapest instance we could get would probably cost around eight to 10 cents a core hour. But there are companies starting up that will layer software on top of these machine images and resell the whole package to you at maybe a buck or two per hour. Amazon gets their take for providing the infrastructure and the independent company gets its share by managing your licenses and providing technical support.

“When you have completed your job, you can save the machine image to a disc and you’re done. You eliminate the costs typically associated with the continued care and feeding of an in-house HPC system, its infrastructure, and IT personnel. In short, you have access to a complete HPC problem-solving system that addresses specifically what you want to do, for a per-usage fee. This should be particularly attractive to small- to medium-sized manufacturers – the missing middle – who know they need digital manufacturing capabilities, but who have neither the money, the time nor the talent to launch their own HPC system.”
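The pay-per-use arithmetic Feiereisen describes can be sketched in a few lines. This is a minimal illustration, not a pricing model from any provider: it assumes both prices are charged per core hour (the article quotes the plain-instance price per core hour but the packaged price only “per hour”), and the job size and the function name `job_cost` are hypothetical.

```python
# Minimal sketch of pay-per-use cost arithmetic.
# Assumption: both the plain-instance and packaged prices are per core hour;
# the article states the packaged price only as "a buck or two per hour".

def job_cost(cores: int, hours: float, rate_per_core_hour: float) -> float:
    """Return the cost in dollars of running `cores` cores for `hours` hours."""
    if cores <= 0 or hours < 0 or rate_per_core_hour < 0:
        raise ValueError("cores must be positive; hours and rate non-negative")
    return cores * hours * rate_per_core_hour

# Rates taken from the quoted ranges: 8-10 cents plain, $1-2 packaged.
RAW_RATE = 0.10       # upper end of the quoted plain-instance price
PACKAGED_RATE = 1.00  # lower end of the quoted packaged price

if __name__ == "__main__":
    cores, hours = 64, 12  # hypothetical job size
    raw = job_cost(cores, hours, RAW_RATE)
    packaged = job_cost(cores, hours, PACKAGED_RATE)
    print(f"plain instance: ${raw:.2f}")
    print(f"packaged:       ${packaged:.2f}")
    print(f"premium for software, licenses and support: ${packaged - raw:.2f}")
```

The difference between the two figures is what the reseller charges for layering the application, license management and support on top of the raw infrastructure; with no job running, both costs drop to zero, which is the point of the utility model.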

Public Cloud Players

For Feiereisen, two of the most interesting players in the public cloud space are Google with its Compute Engine and Amazon with EC2. He notes that while Google Compute Engine was announced last year on June 27, Amazon EC2 has been around for five years or so. Over the years Amazon has continually upgraded the underlying infrastructure and added new services.

“People knock Amazon EC2 as just being a collection of cheap processors – it’s not really an HPC system,” he says. “But Amazon has been listening to its customers and has made a lot of changes behind the scenes. Now, if you ask for an HPC instance, they have the smarts to direct you to a properly interconnected set of high performance processors that you can call up. You pay by the hour and don’t have to maintain the infrastructure – a perfect solution for the missing middle.

“I foresee a constellation of Amazon EC2, Google Compute Engine, and the application providers layered together with application integrators – companies that know how to run these applications in the cloud,” Feiereisen adds. “They will make a business of providing these smaller companies with usable machine instances. Imagine a small auto parts supplier in Detroit that is trying to design a particular component but doesn’t have the expertise or capital to maintain its own HPC system. They could grab one of these instances off the shelf and get the job done for their OEM. When the job is delivered to, say, Ford or GM, the supplier retires all the instances and has no capital investment just sitting around.

“Overall, it’s all about providing access to HPC as a utility, as a commodity,” concludes Feiereisen. “It’s like an appliance in the home connected to a plug in the wall. When you’re done you switch it off and no longer pay for its operation. Apply the same principle to HPC in the cloud and it’s a game changer.”