AMD, Applied Micro Take Fabric Weaving 101 For ARM Server Chips

AMD and Applied Micro, the two biggest and most vocal of the remaining ARM server chip makers now that upstart Calxeda has shut down, have taken up weaving. Both companies are integrating network and fabric interfaces into their forthcoming 64-bit ARM chips aimed at servers, and they provided a little more detail about their on-chip fabric plans at the Open Compute Summit in San Jose this week.

There are two competing forces at work in the datacenter these days. One is increasing integration of components onto server processors and the other is the disaggregation of components in the server and the rest of the rack. The former allows for the ever-increasing transistor budgets enabled by Moore's Law to be put to good use, while the other allows for different components of the server rack – and someday the server itself – to be upgraded individually and on their own schedules.

There is considerable debate about how far the on-chip integration should be taken. Some chip makers think that Ethernet interfaces are enough, while others want to bring switches or chunks of fabrics onto the die. For instance, Calxeda's secret sauce in its ECX-1000 and ECX-2000 chips was the integration of a distributed Layer 2 switch right on the die next to the ARM cores, which then fed into multiple ports that could be configured as Ethernet ports running at various speeds. By adding this distributed switch, Calxeda was, in theory, able to scale to 4,096 nodes in a single cluster without the need of a single top-of-rack switch, and the plan for future chips in the 2015 timeframe, before the venture money ran out, was to push that scalability to up over 100,000 nodes.

Neither AMD nor Applied Micro have voiced such ambitious plans for their on-chip networking. In fact, AMD has quietly tweaked its plan for the "Seattle" ARM server chips. Applied Micro is only now beginning to give some details about its X-Weave fabric, which it has been vague about in its discussions about its X-Gene ARM server chip.

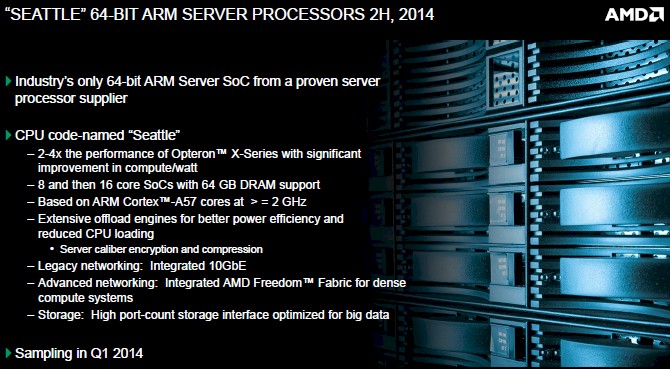

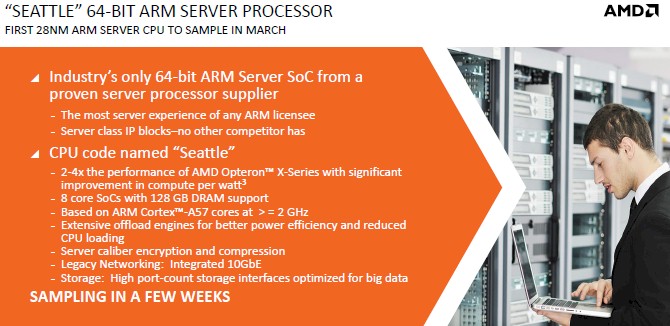

You have to look carefully at the Seattle ARM server chip presentations from this year and last year to notice the change, and this is, of course, precisely the kind of thing that EnterpriseTech does. Here are the feeds and speeds from the Seattle chip that AMD provided last June, when it first started talking about its plans:

And here are the specs that AMD shared about the chip at the Open Compute Summit this week:

If you look closely, you will see that the on-chip support for the Freedom fabric that is at the heart of the SeaMicro server line from AMD is no longer on the Seattle chip. The chip will have integrated 10 Gb/sec Ethernet ports, as was planned.

"AMD has made significant investments in continued enhancements to the Freedom Fabric architecture," John Williams, vice president of marketing at AMD, tells EnterpriseTech in explaining the change. "Because of the development timeline requirements of the 'Seattle' processor, the capabilities offered by the next generation discrete fabric ASIC will be significantly more advanced and much better suited to the A-Series processors. AMD's customers have guided us to pair the first generation of the AMD Opteron A-Series processors with the next generation discrete Freedom Fabric ASIC solution and then to enable cost optimizations with an integrated solution in future generation A-Series processors. We decided to forgo integration of the Freedom Fabric, but we will include it in our next generation."

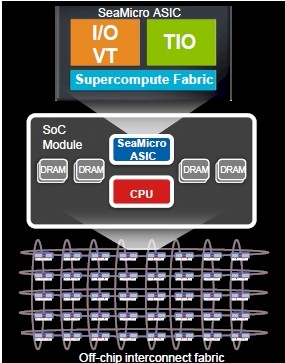

So the Seattle chips, which will be commercialized as the Opteron A1100, will be able to plug into the SeaMicro chassis, but they will do so using the existing first generation Freedom fabric, which implements a 3D torus interconnect between 64 processor cards inside the SeaMicro chassis. This first generation fabric was implemented on a special ASIC that resides in the backplane of the SeaMicro server, and it performs many different functions. The server nodes send their signals back and forth to the Freedom ASIC through PCI-Express mechanicals, which is a very clever way of doing it and one that other microserver makers are using after SeaMicro pioneered this approach.

So the Seattle chips, which will be commercialized as the Opteron A1100, will be able to plug into the SeaMicro chassis, but they will do so using the existing first generation Freedom fabric, which implements a 3D torus interconnect between 64 processor cards inside the SeaMicro chassis. This first generation fabric was implemented on a special ASIC that resides in the backplane of the SeaMicro server, and it performs many different functions. The server nodes send their signals back and forth to the Freedom ASIC through PCI-Express mechanicals, which is a very clever way of doing it and one that other microserver makers are using after SeaMicro pioneered this approach.

The first generation of the Freedom fabric has an aggregate bandwidth of 1.28 Tb/sec, which is about as much as a good top-of-rack switch these days. In addition to linking the server nodes to each other, the Freedom ASIC can drive up to 16 Ethernet ports running at 10 GB/sec or 64 ports running at 1 Gb/sec speeds to the outside world. This ASIC also virtualizes all I/O in the system, which means you can dynamically reallocate resources for each node in the SeaMicro box on the fly. The ASIC incorporates a field programmable gate array that load balances workloads across the chassis and has features that allow for pools of CPU, memory, disk, and fabric bandwidth to be pooled together and give their own distinct quality of service level.

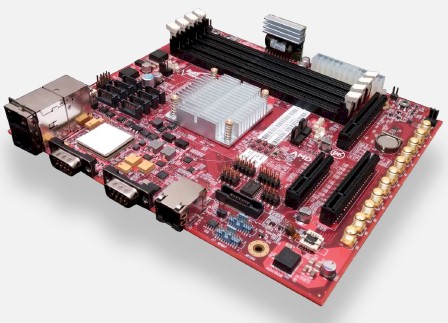

The SeaMicro server cards can hold multiple processors and dedicated memory for them. The current Xeon and Opteron cards have one processor per card, but the Atom-based cards (which AMD doesn't sell very many of any more) had four or six processors per card, depending on the generation. Given the size of the Seattle chip, which you can see on this Opteron A-Series development kit below, it will be tough to get two of these on a single CPU/memory card.

AMD has not said much about what its next generation of Freedom interconnect will look like, but Andrew Feldman, who is in charge of the Data Center Server Solutions business unit as well as the Server CPU business unit at AMD, has conceded in past conversations that one of the things that would be useful is to expand the fabric beyond one chassis so that multiple enclosures could share data and work seamlessly.

This is more or less the approach that Calxeda took with its Fleet Services fabric. But perhaps the "Aries" XC interconnect developed by Cray and now controlled by Intel offers some better inspiration, since a butterfly network as implemented by Aries has loads of bi-section bandwidth and is easier to configure and reconfigure as nodes are added or taken out of a cluster than a torus network.

There are other details with the AMD Opteron A1100 ARM server chip worth noting. The chip is based on the 64-bit Cortex-A57 core from ARM Holdings, and it will come with either four or eight cores. The chip will have up to 4 MB of L2 cache and up to 8 MB of L3 cache that is shared across those ARM cores, and will support both current DDR3 and future DDR4 memory running at speeds up to 1.87 GHz. The chip supports four memory sockets, and the interesting bit is that maximum memory on the tweaked Seattle design has been doubled up to 128 GB. The chip will have eight lanes of I/O coming out of its PCI-Express 3.0 controller, dual 10 GB/sec Ethernet ports, and eight SATA3 ports for peripherals. This will allow a one-core per disk ratio that is the sweet spot for workloads such as Hadoop data analytics.

Feldman said at the Open Compute Summit this week the chip would be sampling in March, and he showed off a working version of a server node that plugs into Facebook's Group Hug microserver chassis, which you can see here:

Presumably AMD will be delivering support for the Seattle chip inside of its of SeaMicro chassis at the same time as it supports the Facebook systems, and it is likely that AMD will also get the Seattle chip into a cartridge for Hewlett-Packard's Moonshot microserver chassis. By the way, just because the Facebook Group Hug system has an ARM server card, no matter who it is from, does not mean that Facebook is necessarily planning to deploy ARM processors in production. Intel's "Avoton" Atom C2000 chip will also be available on a Group Hug card and on the Moonshot cartridges, as will other processors.

Over at Applied Micro, which was the first licensee of the 64-bit ARMv8 architecture and which has been working to be the first to market with a 64-bit ARM server chip, CEO Paramesh Gopi took the stage at Open Compute to show off a version of the Group Hug microserver card and a Moonshot cartridge that was also based on its X-Gene 1 processor. The X-Gene 1 chip has eight cores, just like the AMD Seattle processor and Intel's Avoton Atom chips, has started sampling to customers. He added that the next generation X-Gene 2, which will be implemented in advanced FinFET processes from Taiwan Semiconductor Manufacturing Corp and which will cram 16 ARM cores plus accelerators onto the die, will start sampling in the spring. By the end of the year, Gopi said, Applied Micro will have two 64-bit ARM chips in the field.

The interesting distinction between these two chips, aside from their networking options, is the fact that AMD is licensing the ARM Cortex-A57 design while Applied Micro is a full licensee and is therefore able to make a custom core so long as it maintains compatibility.

Applied Micro is also working to integrate its X-Weave switching, which will provide 10 Gb/sec and 100 Gb/sec networking between X-Gene nodes, into its systems, and added that this switch will support Remote Direct Memory Access (RDM) over Ethernet using the RoCE protocol.

EnterpriseTech will be talking to Applied Micro about exactly how the X-Gene chips and X-Weave interconnect will be implemented and how far it will scale. Stay tuned.