Disney and the Details: 400,000 Hairs on a Field Mouse

The make-believe world of Disney Animation is no doubt the result of arduous work. Still, it’s harder than you might think, consuming Top500-class supercomputing resources to produce an eye-popping level of detail (400,000 hairs on the rendering of a field mouse). That detail comes together to deliver the convincing sense of reality-based fantasy that has driven the creation of 56 animated feature films.

Take “Zootopia,” a comedy-adventure film released earlier this year in which animals rule the earth. In a single, devilishly complex outdoor shot, there’s an island with 39 million blades of grass, a bear with a million hairs, 90 million hairs on each giraffe and 400,000 on the aforementioned mouse. Or “Moana,” Disney’s latest, an ocean-going adventure story that’s laden with large-scale simulations of waves, boat wakes and spray coming off surf (including “performance water” – water that takes on a human personality), some of which are composed of billions of particles.

“It’s a unique blend of technology and artistry which creates the magic,” said Rasmus Tamstorf, a senior research scientist at Burbank-based Disney Animation, which has 900 employees. Tamstorf has worked on 17 feature films and has expertise in cloth, hair and soft tissue simulation. The complexity, cost and supercomputing resources Disney commits to animated movies were the topic of a presentation at SC16 last week in Salt Lake City.

At the core of the Disney Animation philosophy is to “tell compelling stories in believable worlds with appealing characters,” Tamstorf said. “In each of these areas it helps to have a foundation of reality in order for us to have something (the audience) can relate to.”

At the story level, this means building an emotional core around familiar themes of love, friendship and loyalty. “But similarly, when it comes to building the believable world and the appealing characters, we increasingly rely on simulation for at least emulating the real world.”

And that takes compute power – a lot of it. But despite being known for innovation and technology adoption, Disney rejects the use of GPUs in the development of animated films:

“We’ve looked at GPUs, but ultimately no, we’re not using them, partly because they’re a pain in the neck to deal with from a developer point of view and from a code maintenance point of view,” Tamstorf said. “We constantly innovate, we constantly change our code, and in practice when we’ve tried maintaining the code on the GPU it has been too much effort compared to how much gain we got out of it. Will that change? I think so. Maybe we need to go back and look at it again. But the gain vs. pain has not been big enough.”

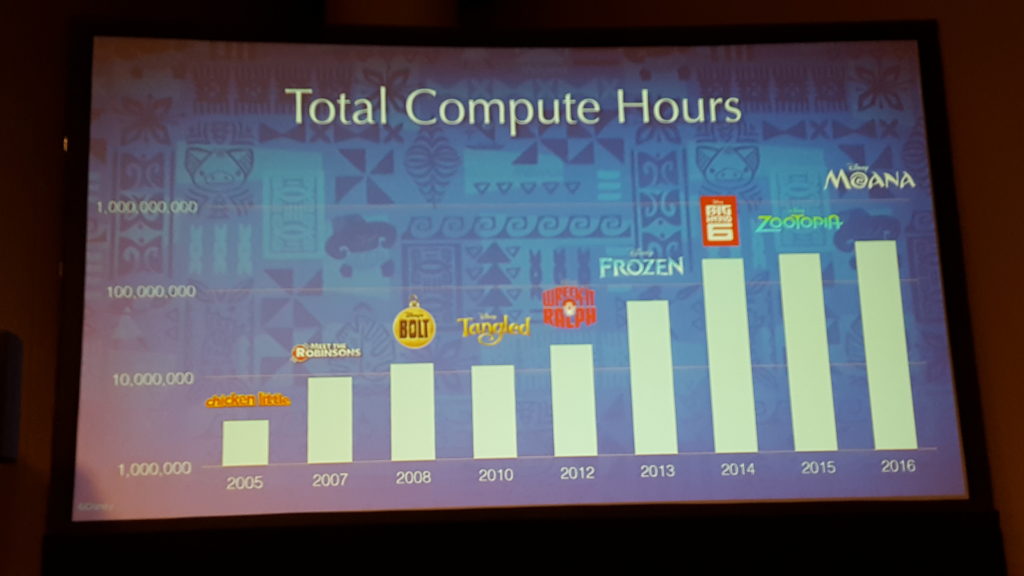

Disney relies on continually growing clusters of dual-socket Intel Xeon-based servers running Red Hat Linux EL7 with Ethernet connectivity to power both home-grown and commercial CFD and animation software (such as Autodesk’s Maya). The compute hours required to produce Disney Animation films are on the rise: in 2005, “Chicken Little” consumed a mere 5 million hours, dwarfed by “Moana” at several hundred million.

“Over the years, what has really evolved in the movies is the amount of complexity that you’re getting,” said Tamstorf. “These compute hours are basically for everything – rendering, simulation, compositing, editing. But by far the biggest chunk is rendering, that’s the expensive part.”

That complexity is seen when a film is broken down into its component parts: each movie has three acts, each act is made up of multiple sequences, and each sequence is composed of shots, which are in turn broken into frames totaling about 100,000 per movie. Those frames have 16 layers. The result is “million-way parallelism” and intense computational demand.
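
The “million-way” figure can be checked with quick arithmetic. The sketch below simply multiplies the two numbers quoted above; treating every (frame, layer) pair as an independent task is an assumption made for illustration, not a description of how Disney's scheduler actually divides the work.

```python
# Figures quoted in the article; both are approximate.
FRAMES_PER_MOVIE = 100_000
LAYERS_PER_FRAME = 16


def independent_tasks(frames: int, layers: int) -> int:
    """Count render tasks, assuming each (frame, layer) pair can run on its own."""
    return frames * layers


tasks = independent_tasks(FRAMES_PER_MOVIE, LAYERS_PER_FRAME)
print(f"{tasks:,} independent tasks")  # 1,600,000 independent tasks
```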

Tamstorf cited a particularly complex shot from “Moana” that, had it been rendered on a single core, would have been completed in 27 years. Granted, 111 frames were run in parallel, so the shot took “only” 88 hours, but that’s still more than three days. Overall, the average shot in the movie required 12 hours. “That means 12 hours to do one iteration of the workflow. So that’s expensive.” And slow.
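
Those figures imply a rough degree of parallelism. The back-of-the-envelope calculation below assumes 365-day years and perfect parallel efficiency, neither of which was stated in the talk, so the result is an inference rather than a number Tamstorf gave.

```python
HOURS_PER_YEAR = 365 * 24  # assumption: 365-day years

single_core_hours = 27 * HOURS_PER_YEAR  # 27 years on one core
wall_clock_hours = 88                    # quoted time for the shot
frames_in_parallel = 111                 # quoted number of parallel frames

# Assumption: perfect parallel efficiency, so cores = total work / elapsed time.
implied_cores = single_core_hours / wall_clock_hours
cores_per_frame = implied_cores / frames_in_parallel

print(f"single-core work: {single_core_hours:,} hours")
print(f"implied cores:    {implied_cores:,.0f}")
print(f"cores per frame:  {cores_per_frame:.1f}")
```

Under these assumptions the shot works out to roughly 2,700 cores in total, or about 24 cores per frame.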

Given these requirements, Disney Animation’s three data centers are defined by what Tamstorf calls “the science of maximizing throughput.” Rather than the traditional supercomputing objective of running a single large job at a time, he said, “we just need to get as much through the whole data center over time as possible.”

This means extracting parallelism out of workloads by running many jobs on single nodes while continually adding more cores. Put on a trend line, Tamstorf said, the number of movie frames (roughly 100,000) and the number of data center cores are rapidly converging.

The computing future for Disney Animation: more HPC. The goal: getting to real-time iteration of renderings and, secondarily, adoption of higher resolution imaging.

“The really important thing is that HPC has the potential to transform many of the workflows that we have,” Tamstorf said. “The creative process can be greatly improved by faster compute.

“If I take (a workload) from three days down to maybe five hours,” he said, “then at least I can do an iteration in the morning, I can look at the result before I go home at night, I can take it off then by the time I come back in the morning I’ll have something new. So I can work in a different way. If I can take it one step further, maybe down to a few minutes where I’m willing to stay at my desk, wait for things to come back and iterate again… And then if I can take it up one more notch I’m at the point where I can really work in real time. I can interact with the artistry. And that opens up so many doors.”

This requires “strong” scaling – the ability to solve a fixed-size problem on a bigger machine. Unfortunately, Tamstorf said, most supercomputing work is based on weak scaling: continually increasing the size of the problem. “What I want to see is a fixed-sized problem run a lot faster.”
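
The difference between the two regimes is often illustrated with Amdahl's law (fixed problem size) and Gustafson's law (problem size grows with the machine). Neither model was mentioned in the talk; they are standard textbook idealizations used here as a sketch, and the 1 percent serial fraction below is a hypothetical value, not a measurement from Disney's workloads.

```python
def amdahl_speedup(serial_fraction: float, cores: int) -> float:
    """Strong scaling: fixed problem size, more cores (Amdahl's law)."""
    return 1.0 / (serial_fraction + (1.0 - serial_fraction) / cores)


def gustafson_speedup(serial_fraction: float, cores: int) -> float:
    """Weak scaling: problem size grows with core count (Gustafson's law)."""
    return serial_fraction + (1.0 - serial_fraction) * cores


def parallel_efficiency(speedup: float, cores: int) -> float:
    """Fraction of ideal linear speedup actually achieved."""
    return speedup / cores


SERIAL_FRACTION = 0.01  # hypothetical value chosen for illustration

for cores in (1, 16, 256, 4096):
    strong = amdahl_speedup(SERIAL_FRACTION, cores)
    weak = gustafson_speedup(SERIAL_FRACTION, cores)
    print(
        f"{cores:>5} cores: strong {strong:8.1f}x "
        f"({parallel_efficiency(strong, cores):6.1%}), "
        f"weak {weak:8.1f}x ({parallel_efficiency(weak, cores):6.1%})"
    )
```

In this idealized model, a 1 percent serial fraction caps strong-scaling speedup at 100x no matter how many cores are added, which is one way to see why making a fixed-size problem run much faster is the harder goal.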

Tamstorf said he has worked with college students on the scaling issue, running cloth simulations on “Edison,” a Cray supercomputer at the National Energy Research Scientific Computing Center in Berkeley.

“Cloth simulation is difficult because you’ve got a lot of layers of contact, and where you have contact you’ve got extra compute,” he said. “So to make this scalable, you need to be able to dynamically load balance and adapt to what the simulation is doing.”

Tamstorf said they saw good results with the Cray machine using the Charm++ parallel processing system, getting “perfect” linear scaling up to 100 cores. Eventually, the team ran 768 cores, though the scaling fell away from perfect.

“This is a very fine-grain problem and that actually makes me very hopeful because I think it means we can potentially apply it to a lot of our problems,” Tamstorf said. “Even if we’re not running massive scale problems, we can still get good value out of scaling out on a traditional HPC architecture.”