Violin Turns Flash Arrays Into Blazing Clusters

What is the fastest machine to run the new Windows Server 2012 R2 operating system from Microsoft? Ironically, it could turn out to be a storage array, and more precisely, a flash-based 6000 Series Memory Array from Violin Memory.

Getting fast persistent storage near compute capacity is the key to accelerating performance and is perhaps more important today than adding cores and clocks in the CPUs. Flash is faster than spinning disks by orders of magnitude, and that ultimately means processors can be fed enough data through their memory buses and cache hierarchies to run at higher utilization.

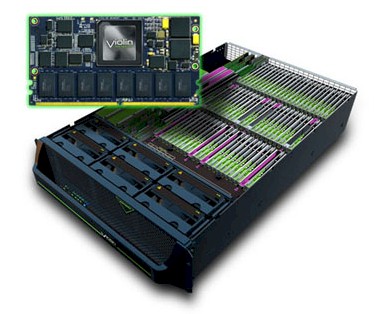

Violin sells flash-based PCI-Express cards for servers, which can help speed them up, but it has cooked up another option: Running Windows Server on the flash array itself. Or, better still, a cluster of such arrays.

Narayan Venkat, vice president of products at Violin, tells EnterpriseTech that the company has been working closely with Microsoft for the past two years. The two worked to make sure the Violin memory arrays were compatible with Windows Server, but then they hit upon the idea of using the array itself as a server.

Like most storage arrays these days, a Violin Memory Array is really just a server that is crammed full of storage devices and clever software to do data protection, thin provisioning, caching, tiering, and other functions that make the storage devices more efficient. In the case of the 6000 series, each 3U enclosure holds two dual-socket X86 server nodes, which have 12 or 16 cores each depending on the model. The nodes use Intel's Xeon E5-2600 processors and have up to 128 GB of DDR3 main memory per board. They will be upgraded to the new "Ivy Bridge-EP" Xeon E5-2600 v2 chips in short order. The memory is used to store metadata for the flash arrays.

These embedded servers are used to run a portion of Violin's Memory Operating System to manage wear leveling, data striping, and other routines to get around the wear issues with flash memory. (You can only write to flash a certain number of times before it fizzles; Venkat says that Violin has architected its arrays so you can re-write all of the data cells inside an array every day and it will still hold up for five years.) Depending on the model, an array has from 20 to 60 of the Violin flash memory cards, with four hot spares.
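To put that endurance claim in rough numbers, here is a back-of-the-envelope sketch. It assumes 365-day years, treats "every day" as exactly one full rewrite per day, and borrows the 64 TB capacity that Venkat quotes for the high-end 6200 further down; the function names are made up for illustration, and the math ignores whatever write amplification happens inside the array.

```python
DAYS_PER_YEAR = 365  # assumption: leap days ignored


def full_rewrites(years, rewrites_per_day=1):
    """Number of complete passes over every cell in the array."""
    return years * DAYS_PER_YEAR * rewrites_per_day


def total_written_tb(capacity_tb, years, rewrites_per_day=1):
    """Host data written over the period, in TB (no write amplification)."""
    return capacity_tb * full_rewrites(years, rewrites_per_day)


print(full_rewrites(5))         # full-array rewrites in five years
print(total_written_tb(64, 5))  # TB written to a 64 TB array in that time
```

Under those assumptions, the claim works out to 1,825 complete rewrites of the array, or a bit under 117 PB written to a 64 TB machine over five years.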

A portion of that Memory Operating System runs on field programmable gate arrays inside the chassis, which accelerates the calculations needed to manage the flash memory as well as doing garbage collection and error and fault management. The Xeon processors run some LUN virtualization.

Starting with Windows Server 2012 R2, which Microsoft put out this week, Violin is allowing for Windows to be booted onto these internal server nodes and run either in bare-metal mode or on top of Microsoft's Hyper-V server virtualization hypervisor. This, says Venkat, will give Windows Server workloads that are I/O intensive a shot in the arm. Instead of taking a millisecond to move data from the Violin arrays over a Fibre Channel switch or iSCSI wire linking the server, the server is in the storage array, linked directly by PCI-Express 3.0. That drops the latency for data transfers down to hundreds of microseconds.

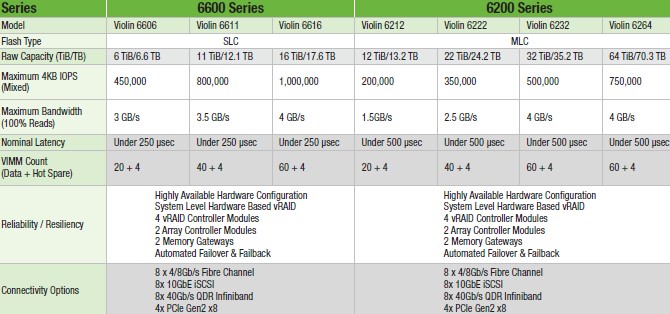

Venkat says that a high-end 6200 Memory Array with 64 TB of flash capacity will sell for around $4 to $5 per GB. This machine delivers 750,000 IOPS of aggregate throughput on a mixed read/write test using 4KB block sizes. (The lower capacity 6600 Series tops out at 16 TB, but delivers 1 million IOPS.) Up to eight of these arrays can be linked together using 40 Gb/sec Ethernet or 56 Gb/sec InfiniBand, creating a sixteen-node cluster with something on the order of 6 million IOPS and 512 TB of capacity, with a list price on the order of $2.35 million. That may be an expensive server cluster, to be sure, but buying arrays with the same IOPS capacity using spinning disk would be very, very expensive too, when you consider that a disk drive yields around 300 IOPS. In fact, says Venkat, it would be about the same price.
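The cluster figures are easy enough to check. The sketch below takes only the per-array numbers quoted by Venkat and multiplies them out; the use of decimal units (1 TB = 1,000 GB) is an assumption, as are the function names, and the disk count considers raw IOPS only, not capacity, RAID overhead, or enclosures.

```python
import math

ARRAYS = 8                # maximum arrays per cluster, per Violin
NODES_PER_ARRAY = 2       # two server nodes in each 3U enclosure
IOPS_PER_ARRAY = 750_000  # 6200-class array, mixed 4KB read/write
TB_PER_ARRAY = 64
DISK_IOPS = 300           # per spinning drive, as quoted
GB_PER_TB = 1_000         # assumption: decimal units


def cluster_totals(arrays=ARRAYS):
    """Aggregate node count, IOPS, and capacity for a cluster."""
    return {
        "nodes": arrays * NODES_PER_ARRAY,
        "iops": arrays * IOPS_PER_ARRAY,
        "capacity_tb": arrays * TB_PER_ARRAY,
    }


def list_price_range(capacity_tb, low_per_gb=4.0, high_per_gb=5.0):
    """Low and high list price in dollars at the quoted $4 to $5 per GB."""
    gb = capacity_tb * GB_PER_TB
    return gb * low_per_gb, gb * high_per_gb


def disks_for_iops(iops, per_disk=DISK_IOPS):
    """Spinning drives needed to match an IOPS target."""
    return math.ceil(iops / per_disk)


totals = cluster_totals()
low, high = list_price_range(totals["capacity_tb"])
print(totals)
print(f"${low:,.0f} to ${high:,.0f}")
print(disks_for_iops(totals["iops"]))
```

Eight arrays give sixteen nodes, 6 million IOPS, and 512 TB, and the $4 to $5 per GB range brackets the $2.35 million estimate. Matching the IOPS with 300 IOPS drives takes about 20,000 spindles, which is why the disk-based alternative is not cheap either.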

Such a flash array cluster is interesting, says Venkat, because it will radically cut down on I/O wait times and therefore the cluster can either do a lot more work or do the same work with a lot fewer cores. According to early benchmark test results from Violin and Microsoft, the cluster of flash arrays can do something on the order of three or four times the work for the same core count as a similar-sized external cluster or do the same work with one third to one quarter of the cores. Having fewer cores means needing fewer software licenses, and that also cuts back on the overall costs.
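As a rough illustration of what that means for licensing, the sketch below applies the three-to-four times figure to a baseline core count. The 96-core baseline is a hypothetical number picked for the example, not one from Violin or Microsoft, and the function name is invented here.

```python
import math


def cores_needed(baseline_cores, speedup):
    """Cores required to do the baseline's work at a given per-core speedup."""
    return math.ceil(baseline_cores / speedup)


baseline = 96  # hypothetical external cluster size, for illustration only
for speedup in (3, 4):
    needed = cores_needed(baseline, speedup)
    print(f"{speedup}x: {needed} cores, {baseline - needed} fewer to license")
```

For software that is licensed per core, the saving scales directly with the cores that drop out of the configuration.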

Early uses for such a cluster of Violin flash arrays running Windows Server 2012 R2 natively include running SQL Server database clusters, perhaps for building faster data warehouses or to drive applications. The flash array cluster could also be used to create a private cloud where I/O sensitivity is an issue, perhaps for virtual desktop infrastructure (hosting virtual PC images on servers and pushing their screens out to thin clients).

A cluster of these Violin arrays can also be used as back-end storage, linking to servers with Microsoft's SMB Direct over InfiniBand or Ethernet, providing very zippy storage performance – at least compared to spinning disks.

Support for running Windows Server 2012 R2 natively on the Violin 6000 Series arrays is in early access now, with general availability expected in January 2014. Venkat says that all of the Violin Memory Arrays will eventually be able to support this function.

Given that the Memory Arrays are using standard Xeon E5 processors, there is absolutely no reason why the machine could not be loaded up with Linux or Solaris if customers asked for it. It would come down to Violin working with the Linux community and Oracle to write drivers to let the operating system talk over the internal PCI-Express bus to the Violin flash memory. The arrays already support attachment to external Linux and Solaris servers, as well as machines running AIX, HP-UX and OpenVMS, which do not run on X86 iron.