Dell Preps Fluid Cache SAN Accelerator For June Launch

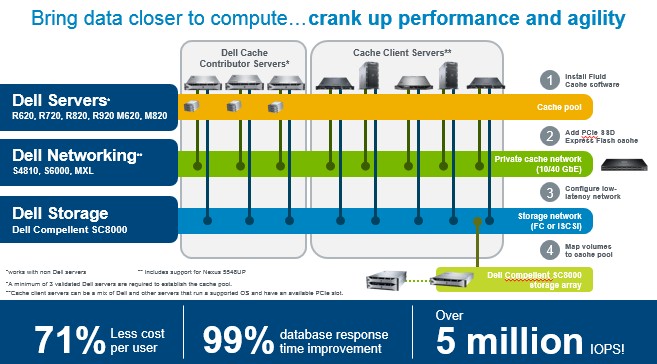

The Fluid Cache for SAN acceleration software that Dell has created to boost the performance of its Compellent storage area network arrays is closer to coming to market. Dell previewed the mix of hardware and software at its Dell World trade show in December last year, and is now providing some more technical details, pricing, and availability dates for the setup.

The Fluid Cache software is based on memory clustering technology that Dell got when it acquired RNA Networks back in June 2011. RNA was one of a few companies that were working on ways of ganging up multiple X86 systems with memory sharing protocols and other virtualization technologies. (Virtual Iron, now part of Oracle, was one, and ScaleMP is another and, significantly, the last free-standing member of the pack.) RNA initially created a memory clustering technology that worked across clusters in an effort to speed up messaging applications, but Dell has tweaked it for its own purposes. Specifically, Fluid Cache, derived from RNA’s code, is used to create a shared memory pool of flash storage that resides physically on servers and yet front ends the disk capacity on the company’s Compellent SAN arrays.

There are several interesting aspects to this Fluid Cache setup, and one is that the Compellent storage arrays remain in control of what data is stored in the flash drives in the system and therefore applications and operating systems do not need to be tweaked to make use of Fluid Cache. That does not mean that Dell has certified any and all operating systems and hypervisors to make use of Fluid Cache – it has not. Brian Payne, executive director of PowerEdge marketing at Dell, tells EnterpriseTech that the company is initially focusing on accelerating database workloads running atop Linux and virtualized environments – particularly virtual desktop infrastructure – running atop VMware’s ESX hypervisor. So, when the Fluid Cache for SAN software is available in June, it will initially only support Red Hat Enterprise Linux 6.3 and 6.4, SUSE Linux Enterprise Server 11 SP3, and VMware ESXi 5.5. Payne says he is not going to preannounce platforms and therefore would not talk about running Fluid Cache in conjunction with Windows Server 2012 and Hyper-V 3.0. But Payne did say that making Fluid Cache work with an operating system or hypervisor did not require access to their kernels and therefore just about any option you can think of is possible.

The Fluid Cache software writes the data to two drives in a server node and waits until the second copy is written before letting the system know that the data has been committed. Eventually, the Compellent array grabs the data and moves it to its disk drives for formal safe keeping. For reads, the Compellent array pushes out the hottest data to the cache server nodes, and it is smart enough to know which node to push data to based on the work that node is doing. If you run your workloads on the same servers that have the Fluid Cache software and Express Flash drives, you get the low latency benefits. But if you want an external server to have access to the cached data as well, there is a Fluid Cache client that can be run on those machines; the client software only runs on Linux at the moment. Each node in a Fluid Cache cluster has a cache manager and a metadata manager program that runs on it. At any given time, only one copy of each of these two programs is running and in charge, but the extra copies are there to take over in the event that the primaries fail.
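The write path described above (two copies land in flash before the write is acknowledged, and the array collects the data later) can be sketched roughly as follows. This is a toy model, not Dell's implementation: the `FlashDevice` and `MirroredWriteCache` classes, the `flush` method, and the dictionary standing in for the Compellent array are all hypothetical names chosen for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class FlashDevice:
    """Hypothetical stand-in for one cache drive in a server node."""
    name: str
    blocks: dict = field(default_factory=dict)

    def write(self, lba: int, data: bytes) -> bool:
        self.blocks[lba] = data
        return True


@dataclass
class MirroredWriteCache:
    """Toy write-back cache: acknowledge only after both copies are written."""
    primary: FlashDevice
    mirror: FlashDevice
    backing_store: dict = field(default_factory=dict)  # stands in for the SAN array
    dirty: set = field(default_factory=set)

    def write(self, lba: int, data: bytes) -> bool:
        first_ok = self.primary.write(lba, data)
        second_ok = self.mirror.write(lba, data)
        if first_ok and second_ok:
            # Only now is the write reported as committed to the caller.
            self.dirty.add(lba)
            return True
        return False

    def flush(self) -> int:
        """Later, move dirty blocks to the backing array; return how many moved."""
        flushed = 0
        for lba in sorted(self.dirty):
            self.backing_store[lba] = self.primary.blocks[lba]
            flushed += 1
        self.dirty.clear()
        return flushed


cache = MirroredWriteCache(FlashDevice("flash0"), FlashDevice("flash1"))
acked = cache.write(42, b"row data")   # acknowledged after both copies exist
flushed = cache.flush()                # array picks up the data afterwards
print(acked, flushed)
```

The point of the sketch is the ordering: the acknowledgment depends on the second copy, while the move to disk happens out of band.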

As EnterpriseTech told you back in December, Dell was looking forward to getting new Express Flash modules for its PowerEdge servers out the door before putting the Fluid Cache for SAN software out for sale. And indeed, those new Express Flash units, based on new flash drives from Samsung and sporting the NVM Express protocol that Intel, Dell, Oracle, Cisco Systems, EMC, Seagate Technology, Micron Technology, NetApp, SanDisk, and others have been working on, are available here in April as we expected them to be. These new units have a PCI-Express 3.0 connector, rather than a slower 2.0 connector, which increases their bandwidth for piping data between the CPUs in the server and the Compellent arrays. NVM Express is one of those protocols designed to get a peripheral out from under the overhead of operating system drivers; doing so cuts latency by about 33 percent.

The original Express Flash drives came in 2.5-inch form factors and were available in 175 GB and 350 GB capacities using SLC flash. The new NVMe flash drives use MLC flash and come in the same 2.5-inch, hot-plug form factor, but with variants aimed at mixed use, read intensive, and write intensive workloads. Here is what the lineup looks like:

- 400 GB, Mixed Use: $2,300

- 400 GB, Write Intensive: $2,900

- 800 GB, Read Intensive: $3,000

- 800 GB, Write Intensive: $5,700

- 1.6 TB, Read Intensive: $5,600

- 1.6 TB, Mixed Use: $7,300
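To compare the variants in the lineup above, it helps to normalize the list prices to dollars per gigabyte. The short sketch below does that; it assumes decimal capacities (1.6 TB taken as 1,600 GB), and the names `LINEUP` and `price_per_gb` are illustrative only.

```python
# Capacity in decimal gigabytes (1.6 TB taken as 1,600 GB, an assumption),
# workload class, and list price in US dollars, as quoted in the lineup.
LINEUP = [
    (400, "Mixed Use", 2300),
    (400, "Write Intensive", 2900),
    (800, "Read Intensive", 3000),
    (800, "Write Intensive", 5700),
    (1600, "Read Intensive", 5600),
    (1600, "Mixed Use", 7300),
]


def price_per_gb(capacity_gb: int, price_usd: int) -> float:
    """List price divided by capacity."""
    return price_usd / capacity_gb


per_gb = {(cap, kind): price_per_gb(cap, price) for cap, kind, price in LINEUP}

# Print cheapest first.
for (cap, kind), value in sorted(per_gb.items(), key=lambda item: item[1]):
    print(f"{cap:>5} GB  {kind:<16} ${value:.2f}/GB")
```

On these list prices, the 1.6 TB read intensive drive works out cheapest per gigabyte at $3.50, and the 400 GB write intensive drive the most expensive at $7.25.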

Earlier cache media, including the 175 GB and 350 GB Express Flash drives (based on SLC flash) can be used in the pool, as can the M420P MLC SSDs that come in 700 GB and 1.4 TB capacities from Micron Technology. The latter can be used in non-Dell servers if customers want to load the Fluid Cache for SAN software on machines other than PowerEdges. Dell is obviously not recommending that. The company has certified its PowerEdge R620, R720, R820, and R920 rack servers and its M620 and M820 blade servers.

The servers in the Fluid Cache for SAN pool are connected to each other by ConnectX-3 host adapter cards from Mellanox Technologies and they make use of the Remote Direct Memory Access over Converged Ethernet (RoCE) protocol to quickly move data between the server nodes in the cache cluster without having to hit the sluggish operating system kernel. The 10 Gb/sec and 40 Gb/sec adapters are available for racks and the 10 Gb/sec mezzanine card is available for blades. Dell has certified its own Force10 S4810 (10 Gb/sec) and S6000 (40 Gb/sec) switches for linking the server nodes together as well as its own MXL blade (10 Gb/sec) for its blade servers. The Nexus 5580UP switch module for the UCS blade system from Cisco Systems is also supported.

The Fluid Cache for SAN software costs $4,500 per server node in the cache pool. You can get a three-node license for $12,000, according to Payne. The prior generation of Fluid Cache for DAS, which as the name implies was used to accelerate the reading and writing of data to direct-attached storage, cost $3,500 per server.

At the moment, a Fluid Cache for SAN setup requires at least three server nodes and Dell has only officially supported clusters with up to eight nodes. The RNA software was designed to scale across 128 nodes, but Dell is not interested in pushing the scalability that far – yet. But it will, particularly if customers do the pushing. For now, Payne says that an eight-node setup provides enough caching for the workloads that Dell is targeting.

The Fluid Cache software can be used to allow machines to do more work, do the same work more quickly, or a mix of the two. So, for instance, in Dell’s lab environment, a three-node cluster of Oracle databases was able to support about 500 users with an average response time of around one second for a bunch of online transaction processing transactions. This baby cluster cost $331,696 at list price, presumably not including the cost of the Compellent SAN. Adding Fluid Cache for SAN and the NVMe flash drives to the three nodes boosted the price of the baby cluster to $360,191, and the machine could support 1,900 concurrent users at the same one second average response time. That is nearly a factor of four increase in the throughput of the cluster for an incremental 8.6 percent in costs. (Another way of looking at it: the cost per user drops from $663 to $190.)
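The arithmetic behind those figures is easy to check. The sketch below uses only the list prices and user counts quoted above; the variable names are illustrative.

```python
# Figures quoted for the three-node Oracle cluster in Dell's lab.
baseline_cost, baseline_users = 331_696, 500
cached_cost, cached_users = 360_191, 1_900

cost_per_user_before = baseline_cost / baseline_users
cost_per_user_after = cached_cost / cached_users
cost_increase_pct = (cached_cost - baseline_cost) / baseline_cost * 100
throughput_gain = cached_users / baseline_users

print(f"cost per user: ${cost_per_user_before:.0f} -> ${cost_per_user_after:.0f}")
print(f"cost increase: {cost_increase_pct:.1f}%")
print(f"throughput gain: {throughput_gain:.1f}X")
```

The throughput gain comes out at 3.8X, which is the "nearly a factor of four" in the text.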

From the early benchmark data, it looks like throughput grows as server nodes are added to the cache pool, but not linearly (no doubt because of the difficulty of coordinating across the nodes). A three-node system shows around a 4X improvement in the number of concurrent users, while an eight-node setup shows a 6X increase. That said, on one OLTP test, a three-node cluster supported 425 users with no Fluid Cache enabled and an eight-node setup with Fluid Cache was able to handle 12,000 users. Measured in users per server node, that is a factor of 10.6X more scalability, and because it can be done with fewer servers (it would take 28 servers to match this performance without Fluid Cache), the Oracle database costs will be lower on clusters using Fluid Cache.
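The 10.6X figure falls out when the two OLTP results are normalized per server node, as this short check shows. It uses only the user and node counts quoted above; the variable names are illustrative.

```python
# OLTP test figures: three nodes without Fluid Cache, eight nodes with it.
uncached_users, uncached_nodes = 425, 3
cached_users, cached_nodes = 12_000, 8

users_per_node_uncached = uncached_users / uncached_nodes
users_per_node_cached = cached_users / cached_nodes

per_node_gain = users_per_node_cached / users_per_node_uncached
total_user_ratio = cached_users / uncached_users

print(f"users per node: {users_per_node_uncached:.0f} -> {users_per_node_cached:.0f}")
print(f"per-node gain: {per_node_gain:.1f}X")
print(f"ratio of total users: {total_user_ratio:.1f}X")
```

Each cached node carries 1,500 users against roughly 142 for an uncached node, while the raw ratio of total users between the two setups is about 28.2.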

If dropping response times for transactions is the main goal, then this is how the initial performance tests for Fluid Cache turn out. On a three-node Oracle database cluster, the average response time for a bunch of OLTP transactions was on the order of 1.5 seconds; turning on Fluid Cache and installing the necessary hardware reduced that OLTP response time by 97 percent to 45 milliseconds. On an eight-node setup running the same tests, the cluster could do a certain amount of work with an 800 millisecond average response time. Turning on Fluid Cache slashed that to a mere 6 milliseconds.
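The percentage reductions can be worked out from the quoted response times. The helper below is a small illustrative function, not anything from Dell's tooling.

```python
def reduction_pct(before_ms: float, after_ms: float) -> float:
    """Percentage drop in response time going from before_ms to after_ms."""
    return (before_ms - after_ms) / before_ms * 100


three_node = reduction_pct(1_500, 45)   # 1.5 seconds down to 45 milliseconds
eight_node = reduction_pct(800, 6)      # 800 milliseconds down to 6 milliseconds

print(f"three-node: {three_node:.1f}% lower")
print(f"eight-node: {eight_node:.2f}% lower")
```

The three-node case matches the 97 percent quoted above, and the eight-node case works out to a reduction of a little over 99 percent.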

Finally, in a related development, Dell has tweaked the Storage Center operating system at the heart of the Compellent storage arrays. With the 6.5 release, Dell is boosting the total addressable capacity of a Compellent SAN from 1 PB to 2 PB. The storage software stack now adds data compression, which can reduce disk requirements by an average of around 77 percent. Dell has also added a synchronous version of its live volume replication for Compellent SANs, which does replication between two SANs at a maximum distance of 50 kilometers. The prior live migration for volumes did the replication asynchronously, which means that data could, in theory, get out of synch between the primary and secondary arrays. However, replication was available over a much longer range, and that is sometimes a tradeoff companies need to make for disaster recovery purposes.