AWS Adopts Docker Containers For Elastic Beanstalk

The Docker application container technology, which is emerging as a new method of packaging and running applications on top of Linux servers, has gotten another important blessing as it moves from an interesting project to a production-grade tool that enterprises can use. Amazon Web Services now allows Docker containers to run on top of its Elastic Beanstalk service.

Elastic Beanstalk is a provisioning and load-balancing service that runs atop Amazon's EC2 compute cloud and automates these procedures so that people do not have to perform them by hand. It is a supplemental service for EC2 and other infrastructure cloud components, and as such it distinguishes AWS from other public clouds and helps drive revenue for AWS.

AWS uses its own variant of the Xen hypervisor to carve up instances on the EC2 cloud. The Elastic Beanstalk service was meant to provide a slightly higher level of abstraction, as Amazon CTO Werner Vogels explains in a blog post, and it started out three years ago supporting Java applications on top of the Tomcat server. These days, Elastic Beanstalk supports six different application containers (Java/Tomcat, PHP, Ruby, Python, .NET, and Node.js) running across eight AWS regions, and Docker is now being added as the seventh. A few weeks ago, Amazon announced that its homegrown variant of Linux supports Docker containers when it runs inside Xen partitions on the EC2 compute cloud. (This Amazon announcement explains how to use Docker in conjunction with the Elastic Beanstalk service, and this AWS documentation goes into even more detail.)

For those of you not familiar with software containers in general or Docker in particular, Docker is an application repository and change management system that runs in conjunction with Linux containers. It solves a complex matrix problem similar to the one the shipping container solved for moving manufactured goods around the world. Before the multi-modal shipping container was invented, manufacturers had to consider how different items might affect each other, how they needed to be lifted and moved, and how they might be packed into ships or trucks. Once the shipping container was standardized, products could be isolated from each other (food and industrial machinery, for instance) and yet be put on the same ship or stacked up in the same port. Moreover, containers could be hauled on ships or by trucks, making the movement of manufactured goods quicker and more efficient.

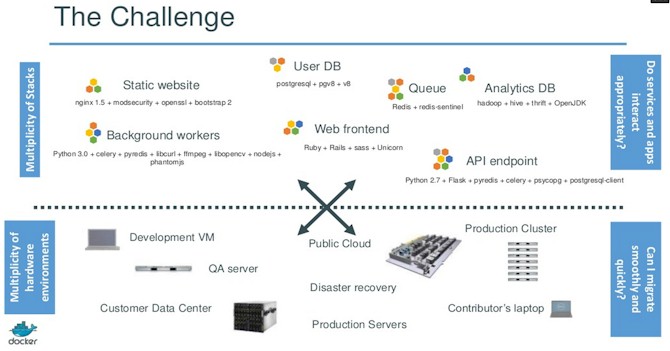

The same matrix hell that used to affect manufactured goods now afflicts software running in the datacenter:

There are so many different software stacks, and so many variations on each of them, running across disparate physical and virtual servers, both inside the enterprise and out in the clouds, that programmers and system administrators spend more time tracking changes than doing useful work. With Docker, the idea is to create a software analog of the shipping container. The application developer worries only about what goes inside the container and gets an automated means of controlling and distributing changes to the code that runs inside groups of containers. The system administrator worries only about the outside of the container, making sure that the machines, virtual or physical, provide what is necessary to run that Docker container.

Docker implements what used to be called a virtual private server on Windows and Linux machines and what Sun Microsystems called a zone or container in its Solaris Unix variant. IBM calls it a workload partition in its AIX Unix variant, and Hewlett-Packard calls it HP-UX Containers in its Unix flavor. The idea remains the same no matter what you call it. Instead of putting a heavyweight hypervisor on a machine and then carving it up into virtual machine partitions, each with its own full operating system and application stack, containers take a single operating system kernel and file system and carve it up into logical runtimes that look and feel like a full, complete instance of the operating system as far as the applications and security settings of each container are concerned. (There is a very detailed explanation of Docker at this link.) Docker is a bit different from these other containers in that it actually implements a separate root file system for each container.

The interesting thing about Docker is that it allows applications running inside containers to be modified and updated. For instance, you can package up the application code along with the Linux binaries and libraries it depends on and ship them around as a unit. Or you can just wrap the container around the application itself. You can also modify an application, package up the changes to that application and its binaries and libraries, and distribute just those changes to existing Docker containers to update the code. This is a bit more sophisticated than running plain LXC containers on top of Linux. The other important point is that code a developer writes for a Linux instance can run on any KVM or Xen virtual machine, LXC container, or bare-metal Linux machine that has the right binaries and libraries installed, and Docker is the means of making sure they are, in fact, installed.
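To make the idea of "distributing just those changes" a little more concrete, here is a toy model in Python. It is a sketch under loudly stated assumptions, not Docker's actual implementation or API: a file system is modeled as a plain dictionary mapping paths to file contents, a "layer" records only what changed, and a deleted file is marked with `None` (a stand-in for the whiteout entries that real union, copy-on-write file systems use). The function names `diff_layer` and `apply_layers` are hypothetical and chosen for illustration only.

```python
from typing import Dict, List, Optional

# Toy model only: path -> file contents. Not Docker's real on-disk format.
FileSystem = Dict[str, str]
# A layer holds only the changed paths; None marks a deleted path
# (a hypothetical stand-in for a union file system's "whiteout" entry).
Layer = Dict[str, Optional[str]]


def diff_layer(old: FileSystem, new: FileSystem) -> Layer:
    """Return only the paths that were added, modified, or removed."""
    layer: Layer = {}
    for path, contents in new.items():
        if old.get(path) != contents:
            layer[path] = contents  # added or modified file
    for path in old:
        if path not in new:
            layer[path] = None  # file removed in the new version
    return layer


def apply_layers(base: FileSystem, layers: List[Layer]) -> FileSystem:
    """Stack change layers on a base image to get a container's root file system view."""
    fs = dict(base)  # copy, so the shared base image is never mutated
    for layer in layers:
        for path, contents in layer.items():
            if contents is None:
                fs.pop(path, None)
            else:
                fs[path] = contents
    return fs


if __name__ == "__main__":
    base = {"/usr/lib/libfoo.so": "v1", "/app/main.py": "print('hello')"}
    updated = {
        "/usr/lib/libfoo.so": "v2",      # library upgraded
        "/app/main.py": "print('hello')",  # unchanged, so not shipped
        "/app/config.ini": "debug=false",  # new file
    }
    change = diff_layer(base, updated)
    print(change)  # -> {'/usr/lib/libfoo.so': 'v2', '/app/config.ini': 'debug=false'}
    assert apply_layers(base, [change]) == updated
```

The point of the design is that the unchanged application file never travels over the wire: only the small change layer does, and any machine already holding the base image can rebuild the updated root file system from it.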

The Docker project was started by dotCloud, a platform cloud provider frustrated by application portability issues. At the moment, Docker is in beta testing and is only at the 0.10 release level, so it is definitely not production grade. That doesn't mean bleeding-edge companies are not using it. (eBay and Cambridge Healthcare are, to name two companies that just can't wait.) The new Ubuntu Server 14.04 LTS release from Canonical, announced last week, supports Docker containers, and so does the forthcoming Enterprise Linux 7 from Red Hat. And now, so do EC2 and Elastic Beanstalk.

It will be interesting to see what Microsoft does for Windows Server and the Azure cloud to create an analog of Docker. Companies definitely want a lighter-weight virtualization layer for some of their applications, and companies operating at extreme scale most certainly want a better mechanism for distributing code changes across their infrastructure.