HP Forges Apollo 6000s For Single Thread, Scale Out Jobs

Back in November last year, EnterpriseTech gave you the inside scoop on the massive 610,000-core server fleet that Intel uses to push Moore's Law along with its electronic design automation (EDA) software. The chip maker told us that it was shifting from two-socket to uniprocessor servers to save money on software licenses and to drive up the performance of those clusters. The reason is that the Xeon E3 processor family tends to move to each new core generation ahead of the Xeon E5s, and microservers based on these chips can cram more, and faster, cores into a given space, for less money, than a set of Xeon E5 processors with a similar number of cores.

As it turns out, Intel was not just shifting to microservers based on its own Xeon E3 processors. It was helping Hewlett-Packard conceive of and design the new Apollo 6000 hyperscale systems that the server maker has just debuted at the Discover 2014 extravaganza in Las Vegas. It is these very machines that Intel is deploying to goose the performance of its EDA cluster as it shifts away from two-socket blade servers manufactured by a number of tier-one server makers.

The Apollo 6000s are designed to be bought and deployed at rack scale, explains John Gromala, senior director of hyperscale product management for the HP Server group. The machines consist of processor sleds with multiple server nodes per sled, as many hyperscale designs do, including HP's own Scalable Systems 2500 and 6500 machines. But with the Apollo machines, HP has gone one step further and created a shared power shelf that can span up to six server enclosures, depending on the configuration and the power requirements of the processors inside those Apollo 6000 enclosures. The power shelf is 1.5U high and supports N, N+1, and N+N configurations, depending on how much power redundancy customers want. At 48U high, the Apollo 6000 racks are a little taller than the standard 42U rack, and that extra 6U of space gives room for four power shelves. (More shelves than that can obviously be put in a rack.) The power shelves come in two ratings: 2,650 watts or 2,400 watts.
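The rack-height arithmetic is easy to check. The following is a minimal back-of-the-envelope sketch, assuming a fully loaded rack of eight 5U Apollo a6000 enclosures (the chassis height given in the chassis description) plus four 1.5U power shelves, and ignoring switches, cabling, and anything else that might share the rack. The function name `rack_budget` is purely illustrative.

```python
# Back-of-the-envelope rack-space budget for a fully loaded Apollo 6000 rack.
# Heights are the figures quoted in the article; switches, cabling, and any
# other gear in the rack are deliberately ignored (an assumption).
RACK_HEIGHT_U = 48.0      # Apollo 6000 rack height
STANDARD_RACK_U = 42.0    # conventional rack height, for comparison
ENCLOSURE_U = 5.0         # Apollo a6000 chassis height
POWER_SHELF_U = 1.5       # shared power shelf height


def rack_budget(enclosures: int, power_shelves: int) -> dict:
    """Return how many rack units the enclosures and power shelves consume."""
    enclosure_u = enclosures * ENCLOSURE_U
    power_u = power_shelves * POWER_SHELF_U
    used_u = enclosure_u + power_u
    return {
        "enclosure_u": enclosure_u,
        "power_u": power_u,
        "used_u": used_u,
        "spare_u": RACK_HEIGHT_U - used_u,
        "fits": used_u <= RACK_HEIGHT_U,
    }


# The extra height over a standard rack, expressed in whole power shelves.
extra_u = RACK_HEIGHT_U - STANDARD_RACK_U
shelves_in_extra_space = int(extra_u // POWER_SHELF_U)

budget = rack_budget(enclosures=8, power_shelves=4)
print(shelves_in_extra_space)  # 4
print(budget)
```

Under those assumptions, the eight enclosures take 40U (which would fit in a standard 42U rack on their own), the four shelves take exactly the extra 6U, and the sketch reports 46U used with 2U to spare.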

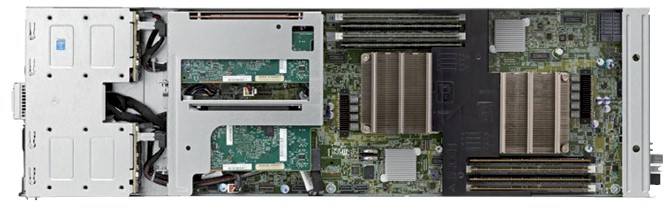

The Apollo a6000 chassis is 5U high and has room for ten processor sleds, which are called the ProLiant XL220a Gen8 v2 server. Each sled holds two single-socket server nodes, each based on a "Haswell" Xeon E3-1200 v3 processor with four cores that top out at 3.7 GHz (4.1 GHz in Turbo mode) and 8 MB of L3 cache. Each of these Xeon E3 chips supports 32 GB of main memory in four DDR3 memory slots, which is not a huge amount of memory, but for the single-threaded jobs that HP is aiming the Apollo 6000s at, clock speed matters far more than memory capacity. The sled has room for two 2.5-inch SAS or SATA drives per node, which can be spinning disks or flashy solid state drives. All of the components on the sled are glued together using Intel's "Lynx Point" C222 chipset.

The XL220a Gen8 v2 sleds do not include their own networking interfaces on the system board. Instead, each sled plugs into a midplane in the chassis for power and networking, and HP's flexible LAN-on-motherboard adapters snap into the back of the enclosure to provide the network ports. This way, the networking is physically separate from the compute. At the moment, 1GbE and 10GbE adapters are available, and InfiniBand could make its way there, too. The server sled has two low-profile PCI-Express 3.0 slots for peripheral cards.

The Apollo 6000 design will eventually have sleds for various kinds of accelerators (Gromala would not be specific, but Intel's Xeon Phi x86 coprocessors and Nvidia's Tesla GPU coprocessors are the obvious choices for some workloads), as well as storage sleds that can be linked to the compute elements.

Fully loaded, an Apollo 6000 rack can hold up to eight enclosures and four power shelves, which yields a total of 80 sleds, 160 nodes, and 640 cores per rack. Gromala says this setup delivers 20 percent more raw performance (as gauged by single-threaded workloads like EDA software) than a rack of Dell PowerEdge M620 blade servers using Xeon E5 processors in Dell's PowerEdge M1000e enclosure. The Apollo 6000 setup also consumes 46 percent less power and takes up 60 percent less space to deliver those cores. Over three years, HP calculates that the Apollo 6000 setup would have a total cost of ownership $3 million lower than a Dell PowerEdge blade configuration with 1,000 nodes.
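The per-rack totals follow directly from the enclosure and sled counts. The sketch below reproduces them and, as a rough illustration, turns HP's percentages into a relative performance-per-watt figure. It assumes every node is populated with the maximum 32 GB of memory, and it assumes the performance and power percentages are both measured against the same Dell baseline, which the article does not spell out; the result is an estimate derived from HP's claims, not a measured number.

```python
# Per-rack density for a fully loaded Apollo 6000 rack, using the counts
# quoted in the article.
ENCLOSURES_PER_RACK = 8
SLEDS_PER_ENCLOSURE = 10
NODES_PER_SLED = 2
CORES_PER_NODE = 4            # quad-core Xeon E3-1200 v3
MAX_MEMORY_GB_PER_NODE = 32   # assumes every node is fully populated

sleds = ENCLOSURES_PER_RACK * SLEDS_PER_ENCLOSURE
nodes = sleds * NODES_PER_SLED
cores = nodes * CORES_PER_NODE
max_memory_gb = nodes * MAX_MEMORY_GB_PER_NODE

# HP's comparison against a Dell M620 blade rack, as ratios to that
# baseline (baseline = 1.0). Assumes both percentages share the same baseline.
relative_performance = 1.0 + 0.20   # "20 percent more raw performance"
relative_power = 1.0 - 0.46         # "46 percent less power"
perf_per_watt = relative_performance / relative_power

print(sleds, nodes, cores, max_memory_gb)  # 80 160 640 5120
print(round(perf_per_watt, 2))             # 2.22
```

Read this way, HP's numbers imply roughly 2.2 times the performance per watt of the Dell blade rack; if the power comparison was made at a different baseline (say, equal core count), that ratio would shift accordingly.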

Gromala did not come to the interview with specs comparing the Apollo 6000s to HP's own BladeSystem machines. We asked, and HP is getting back to us on this, as well as on the feeds and speeds behind the price comparison outlined above.

Kim Stevenson, Intel's chief information officer, said in the datasheets for the new machines that Intel already has 5,000 of the servers deployed in its fleet and that these machines run its EDA software 35 percent faster than the Xeon E5 processors (which span several generations) used in its massive server fleet.

The Apollo 6000 is not just aimed at EDA software. It will also be appropriate for engineering, physical sciences, and financial modeling workloads, where a simulation is broken up into many single-threaded tasks that each run serially, so per-core clock speed matters most.

The Apollo 6000 system will be generally available in the third quarter, and pricing will be announced at that time. The most recent releases of Microsoft's Windows Server, Red Hat's Enterprise Linux, and SUSE's Linux Enterprise Server are supported on the machines.