IBM DOME Microserver Could Appeal To Enterprises

An experimental microserver system developed by IBM in conjunction with the Netherlands Institute for Radio Astronomy has the potential to end up in commercial systems running massively parallel workloads. So hopes Ronald Luijten, a system designer at IBM Research in Zurich, Switzerland, who was showing off the initial microserver for the DOME project to government officials last week.

ASTRON, as the institute is also known, is working with IBM researchers on the DOME project, which is developing the computing, storage, networking, and algorithms that will be necessary to support the exascale-class data streaming, signal processing, and visualization that future radio astronomy using the Square Kilometer Array (SKA) will require. As with other technologies developed at the Zurich labs, there is a good chance that the microservers ultimately deployed at ASTRON a few years hence will find uses in commercial datacenters, particularly those that have some of the same power and thermal constraints that a large supercomputing center has. So the five-year, €32.9 million partnership between IBM and ASTRON is not just about radio astronomy. The SKA is expected to be operational by 2024 and will need several exaflops of computing to run the array and process its data.

This gradual commercialization, explains IBM, is precisely what happened with the water-cooling project at the Zurich labs that ultimately led to the creation of the €80 million, 3 petaflops SuperMUC machine at the Leibniz Supercomputing Centre (LRZ) in Garching, Germany. It started out as a university research project, was picked up by LRZ, and was eventually commercialized by IBM's Systems and Technology Group.

Luijten tells EnterpriseTech that he began his journey to microservers – minimalist computers with all of their components crammed as close together as possible to conserve energy and cost – four years ago. As a rule, IBM Research tends to look at time horizons from three years to three decades ahead of today, and in the case of the microserver research that Luijten and his colleagues are working on, they are looking at the kinds of data processing technologies that will be needed five to eight years out.

"This, to me, looked like something that might disrupt the industry, and now that I am four years down the road from when I got this insight, I can only say that microserver technology will disrupt this industry," says Luijten. "I don't know in what way or form, but it is going to be a huge change. The DOME project is not yet at the stage where I can definitely say that IBM will go and commercialize this technology, but it is definitely something that might happen." He adds that the DOME team is a couple of months away from getting the initial commercial partners and the executives at Systems and Technology Group on board to undertake such an effort.

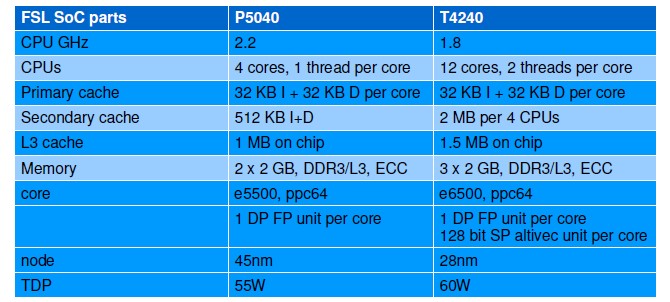

The DOME project is essentially coming up with what Luijten calls a poor man's BlueGene/Q massively parallel system. Instead of the eighteen-core PowerPC A2 chip made by IBM, its proprietary 5D torus interconnect, and a custom Linux kernel, the next iteration of the DOME microserver will be based on the Freescale Semiconductor T4240 processor, will use standard Ethernet networking and SATA peripheral interfaces, and will run an off-the-shelf Linux. The prototype DOME microserver was based on the Freescale P5040 chip, which was much less impressive. Here are the feeds and speeds of the two Freescale processors that the DOME team has built server nodes from:

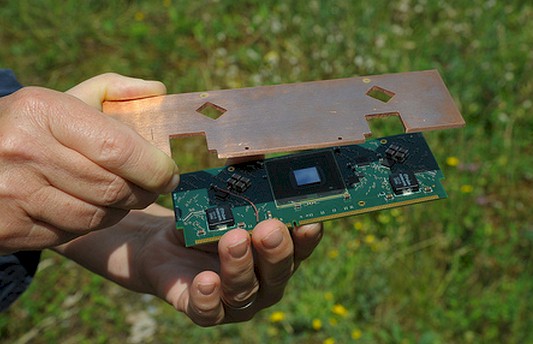

With many microservers, system builders use the PCI-Express mechanicals to link the server nodes to power and to the midplanes or backplanes in their enclosures, but the IBM Research team chose a fully-buffered DIMM memory slot as its means of mating a microserver to the chassis and therefore linking it to the outside world. This form factor is only 133 millimeters wide, so it is also smaller than the PCI-Express slot. And the first generation DOME microserver is only 55 millimeters high, compared to the 30 millimeters of an FB-DIMM memory module. This is considerably smaller than the microservers developed to date using ARM and X86 processors and is also quite a bit smaller than the PowerPC A2 node inside of the BlueGene/Q massively parallel system.

The DOME microserver has a copper heat spreader that is used to take the heat away from the Freescale Power SoCs and the capacitors on the system board. And cleverly, the same copper plate is also used to provide electrical power to the microserver node from the chassis. Copper is an excellent conductor of heat and electricity. The copper plates hook into heat exchangers; water enters the DOME microserver enclosure at 50 degrees Celsius and exits at 55 degrees Celsius. (The junction between the copper plate and the SoC is 85 degrees.) This modest 5 degree difference is enough to keep 128 compute nodes in a 2U enclosure cool. And equally importantly, the resulting hot water is a commodity that can be used for other purposes or sold, perhaps to heat office space.
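To get a feel for what a 5 degree rise implies, the standard heat balance Q = m × c × ΔT gives the water flow needed per watt of heat removed. The sketch below is only illustrative: the article does not state the power draw of a node or an enclosure, so the 1,000 watt heat load in the example is a hypothetical figure, and the specific heat of water is rounded to 4,180 J/(kg·K).

```python
# Rough heat-balance sketch for the water loop described above.
# Only the 50 C inlet and 55 C outlet come from the article; the heat
# load passed in is a hypothetical value chosen by the caller.

SPECIFIC_HEAT_WATER = 4180.0  # J/(kg*K), approximate value for liquid water
INLET_C = 50.0                # inlet temperature quoted in the article
OUTLET_C = 55.0               # outlet temperature quoted in the article


def mass_flow_kg_per_s(heat_load_watts, inlet_c=INLET_C, outlet_c=OUTLET_C):
    """Return the water mass flow needed to carry away heat_load_watts."""
    delta_t = outlet_c - inlet_c
    if delta_t <= 0:
        raise ValueError("outlet temperature must be above inlet temperature")
    return heat_load_watts / (SPECIFIC_HEAT_WATER * delta_t)


if __name__ == "__main__":
    hypothetical_load_w = 1000.0  # assumption, not a figure from the article
    flow = mass_flow_kg_per_s(hypothetical_load_w)
    print(f"{flow:.4f} kg/s, or about {flow * 60:.2f} kg per minute")
```

Under these assumptions, each kilowatt of heat needs a little under 3 kilograms of water per minute, and the required flow scales linearly with whatever the real heat load turns out to be.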

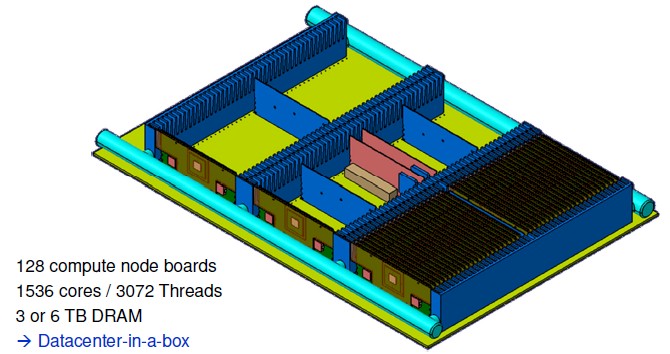

Here is what the mechanical for the hypothetical DOME microserver enclosure, what Luijten calls a "datacenter in a box," looks like:

When equipped with the Freescale T4240 processors, that DOME microserver enclosure will have a total of 1,536 cores and 3,072 threads with either 3 TB or 6 TB of memory spread around the nodes. Luijten anticipates that this enclosure will be ready in the first quarter of 2015.
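The enclosure totals can be broken back down to a single node with simple division. The sketch below uses only the figures quoted in the article (128 nodes, 1,536 cores, 3,072 threads, 3 TB or 6 TB); the one assumption is that a terabyte is counted as 1,024 GB, and the function name is made up for illustration.

```python
# Per-node breakdown of the enclosure figures quoted in the article.
NODES = 128
TOTAL_CORES = 1_536
TOTAL_THREADS = 3_072
MEMORY_OPTIONS_TB = (3, 6)

GB_PER_TB = 1024  # assumption: binary terabytes


def per_node_breakdown():
    """Return (cores per node, threads per core, memory per node in GB)."""
    cores_per_node = TOTAL_CORES // NODES
    threads_per_core = TOTAL_THREADS // TOTAL_CORES
    memory_gb = [tb * GB_PER_TB // NODES for tb in MEMORY_OPTIONS_TB]
    return cores_per_node, threads_per_core, memory_gb


if __name__ == "__main__":
    cores, threads, memory = per_node_breakdown()
    print(f"{cores} cores per node, {threads} threads per core")
    print(f"memory per node: {memory[0]} GB or {memory[1]} GB")
```

That works out to 12 cores and 24 threads per node, with 24 GB or 48 GB of memory apiece.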

Luijten has a very precise definition of what is required for something to be called a microserver. The power conversion, flash memory, and main memory chips that are part of a server all use different CMOS process technologies and have very different power and thermal requirements, and therefore cannot be integrated onto the system-on-chip (SoC). But every other component of the system, including networking, peripheral ports, and various workload accelerators, can and should be integrated on the SoC. The reason is simple: the shorter the distances are between components, the less power is consumed to move data. According to statistics cited by Hewlett-Packard a few weeks ago when it launched The Machine, a memristor-focused microserver cluster lashed together by silicon photonics, more than 90 percent of the energy consumed by systems goes to moving data around from component to component, not to actually doing any computation involving that data. Luijten says the number is more like 98 percent, and has coined the phrase "compute is free, data is not" to drive the idea home. Luijten also says that a microserver has to offer 64-bit processing and memory access, has to be based on commodity components, and has to run a real server operating system such as Red Hat Enterprise Linux 6, SUSE Linux Enterprise Server 11, or their equivalents. So if your machine doesn't meet all of those criteria – and no microserver sold to date does, by the way – then in Luijten's mind it is not a microserver.

It took Luijten and some people at Freescale about four months to get Red Hat Fedora 17 running on the initial P5040-powered DOME microserver. The operating system was up and running back in April on the second rev of the P5040 rendition of the microserver. Last week, the first rev of the microserver based on the T4240 processor was back from the manufacturer, and if he had not been making a presentation to the Dutch government on the status of the DOME project, showing off the microservers and their water cooling, Luijten said he would have been in the lab playing with the new toy.

A couple of months from now, an eight-node carrier board will be back from the manufacturer with an integrated 10 Gb/sec Ethernet switch, power modules for the nodes and switch, as well as the water cooling for the hot components. This machine will be equipped with IBM's DB2 BLU parallel and in-memory database as well as the Watson question-answer software stack and tested to see what kind of performance it can deliver on commercial workloads.

"Once we have those proof points, then we can start having discussions on whether we want to commercialize this," says Luijten. "I am doing this because I want very high energy efficiency achieved by very dense packaging as well as very low cost."

The system board that IBM Zurich created for the first pass of the DOME microserver cost about $500 for a single unit, including the processor and 4 GB of main memory plus the circuit board and the copper plate. Luijten is not sure what the cost of the T4240 board will be yet, but he estimates that it will be something on the order of $650 for the bill of materials for the components. As for the DOME enclosure, the most expensive component is the quick-connect plumbing for the water cooling, but with 128 nodes in the box, the cost of the chassis quickly gets dwarfed by the cost of the compute nodes. The enclosure might cost around $10,000, but 128 compute nodes could cost $83,200.
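The cost arithmetic is easy to check. The sketch below multiplies out the estimated $650 bill of materials across 128 nodes and adds the rough $10,000 enclosure figure; both numbers are the article's estimates rather than prices, and the function name is hypothetical.

```python
# Back-of-the-envelope cost check using the estimates quoted in the article.
NODES = 128
NODE_BOM_USD = 650       # estimated bill of materials per T4240 node
ENCLOSURE_USD = 10_000   # rough estimate for the water-cooled chassis


def enclosure_costs():
    """Return (cost of all nodes, total system cost, chassis share of total)."""
    nodes_total = NODES * NODE_BOM_USD
    system_total = nodes_total + ENCLOSURE_USD
    chassis_share = ENCLOSURE_USD / system_total
    return nodes_total, system_total, chassis_share


if __name__ == "__main__":
    nodes_total, system_total, chassis_share = enclosure_costs()
    print(f"nodes: ${nodes_total:,}  total: ${system_total:,}")
    print(f"chassis share of total: {chassis_share:.1%}")
```

On these estimates the nodes come to $83,200 and a fully populated box to $93,200, so the chassis accounts for roughly a tenth of the total.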

While IBM has designed its own system boards using the Freescale processors as part of the DOME project, it did not go all the way and create its own Power SoC. But that is not out of the realm of possibility, particularly with Big Blue opening up the Power8 architecture through the OpenPower Foundation.

"If you would be helpful and get around $75 million in my direction, I know exactly what kind of SoC I would build," jokes Luijten. "My hope is that in the OpenPower Foundation, we will have an activity to build an SoC, in my definition, and with a Power8 core. I think that would kick ass. That core is a very nice thing, and I think that would be fantastic."

And if enough customers who are willing to pay for a system that is based on the ideas embodied in the DOME microserver materialize, this is precisely the kind of machine that IBM would be inclined to move from science project to commercial product. Time will tell.