HGST Shows Off Zippy PCM Prototype, Updates Flash Cards

HGST, the disk drive and NAND flash storage device maker that is now part of Western Digital and that is based on IBM’s former UltraStar disk business, is using the Flash Memory Summit to show off a prototype PCI Express card based on phase change memory, one of several contenders to eventually replace NAND flash as the non-volatile memory of choice. The company is also upgrading its FlashMAX flash cards and updating its ServerCache caching software to take on the open source alternative from Facebook.

Phase change memory is wickedly fast compared to NAND flash memory, but it is still quite expensive to manufacture and relatively low density compared to other media commonly used inside of systems and storage arrays. The data-storing component in PCM is essentially a chalcogenide alloy, which has germanium, antimony, and tellurium in it and which can be induced into an amorphous or crystalline state by heating. A current passing through the chalcogenide can do the heating and induce either state, depending on the amount of heat and time: it takes on the order of 50 nanoseconds and a high current to induce the amorphous state, and on the order of 100 nanoseconds and a lower, more carefully controlled current applied over that longer period to induce the crystalline state. The important thing is that PCM can store data using either state, can scale down to 20 nanometer processes, has long data retention, and can endure 100 million cycles.

PCM was developed initially for handsets and will very likely be commercialized for enterprise uses, much as has been the case with flash memory. Like other memory technologies, including resistive RAM, PCM has had its manufacturing issues. Micron Technology makes DRAM main memory and is working on 3D stacked memory and flash, but has also put out two generations of PCM. The current PCM memory from Micron, which was used in the prototype, is based on 1 Gb chips etched with 45 nanometer processes; they were originally used in Nokia smartphones and are not being sold at the moment. But don’t despair. Micron is working on a next generation PCM technology, due in 2015, that will have higher bit densities and lower manufacturing costs. A PCM kicker is slated for 2016.

PCM memory is so fast that the new NVMe protocol, which was created to streamline the interaction between server operating systems and NAND flash and which is just starting to be deployed, cannot handle the speed, Ulrich Hansen, vice president of product marketing at HGST, tells EnterpriseTech. So the company’s engineers have been working with researchers at the University of California at San Diego to come up with a new interface protocol that can handle latencies approaching 1 microsecond, so that when PCM is commercially viable the interfaces will be tuned up to handle both the high bandwidth and low latency that PCM can drive. This protocol, which has been under development for years as part of the Moneta project, is not just being created to support PCM, but also other emerging non-volatile memory technologies that are contenders, just in case PCM does not pan out as expected. The new protocol is called DC Express and was introduced at the USENIX File And Storage Technologies conference in February this year. The DC Express protocol and the card design are media agnostic, meaning they are not tied to PCM non-volatile memory in particular. Importantly, the prototype card does not require any changes to the operating system kernel or the server BIOS to work.

HGST’s PCI Express PCM card does not have very much in the way of capacity, explains Hansen, with only a few gigabytes. But it has a random read access time of around 1.5 microseconds, which is more than two orders of magnitude better than NAND flash memory and puts it somewhere between DRAM main memory and flash in terms of speed. (This performance was achieved in a non-queued setup.) The prototype PCM card can deliver a stunning 3 million I/O operations per second in a queued environment using 512 byte files. The prototype is a full height, full length card with a relatively unimpressive PCI-Express 2.0 x4 interface. It is unclear how far HGST could push the IOPS with a faster PCI-Express 3.0 interface with more lanes, or how fast the density of PCM cards will rise as the technology matures and rides the Moore’s Law curve.
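To put the quoted latency and IOPS figures side by side, here is a rough back-of-the-envelope sketch in Python. It applies Little's Law (requests in flight = throughput × latency) and assumes, optimistically, that the 1.5 microsecond latency still holds under queued load, which HGST did not state. The function names are made up for illustration.

```python
# Figures quoted for the prototype card.
READ_LATENCY_S = 1.5e-6    # ~1.5 microsecond random read, non-queued
QUEUED_IOPS = 3_000_000    # ~3 million IOPS, queued
IO_SIZE_BYTES = 512        # 512-byte transfers


def iops_at_queue_depth_one(latency_s):
    """With one request in flight at a time, throughput is 1 / latency."""
    return 1.0 / latency_s


def implied_requests_in_flight(iops, latency_s):
    """Little's Law. ASSUMPTION: latency under load equals unloaded latency."""
    return iops * latency_s


def data_rate_bytes_per_s(iops, io_size_bytes):
    """Payload bytes moved per second at a given IOPS and transfer size."""
    return iops * io_size_bytes


qd1 = iops_at_queue_depth_one(READ_LATENCY_S)
inflight = implied_requests_in_flight(QUEUED_IOPS, READ_LATENCY_S)
rate = data_rate_bytes_per_s(QUEUED_IOPS, IO_SIZE_BYTES)

print(f"IOPS at queue depth 1:       {qd1:,.0f}")
print(f"Requests in flight at 3M:    {inflight:.1f}")
print(f"Payload data rate at 3M IOPS: {rate / 1e9:.3f} GB/sec")
```

Under those assumptions, a single outstanding request tops out near 667,000 IOPS, so the 3 million IOPS figure implies at least four or five requests in flight, and it moves roughly 1.5 GB/sec of payload.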

Hansen anticipates that it will take anywhere from three to five years for PCM memory to become an affordable option for enterprise applications. But there are no doubt some financial institutions, government agencies, supercomputing centers, and other organizations with extremely latency-sensitive applications that will probably look at the PCM card prototype and wonder if they can get their hands on a few of them to begin testing how they might be deployed to further accelerate their applications.

The density of the prototype card is not anything close to that of DDR3 main memory, with only 2 GB on the card, so for actual applications DRAM is still faster and denser. Then again, when the power goes off, the data in DRAM is gone, and DRAM consumes a lot of juice. A couple of iterations of Moore’s Law and PCM cards could pack as much capacity as a NAND flash card from a year or two ago and offer similar economics and considerably zippier performance. PCM could do to flash what flash did to disk drives, adding another storage tier, and there is no reason to believe that it won’t end up in memory channel storage as well as in PCI cards and SSDs backed by controllers. There could even be hybrid PCM-flash SSDs that use PCM to offer fast read and write caches for NAND flash.

Updating The Flash

Last week, to get some of its news out ahead of the deluge of flash news coming out this week, HGST updated its UltraStar SAS SSDs. These UltraStar flash drives are developed under a partnership between HGST and Intel, which was established in 2008. Under that agreement, Intel supplies HGST with flash memory and some NAND flash management software, and the resulting family of drives is only available through HGST. The first generation of products had 6 Gb/sec SAS interfaces and the latest ones double that up to 12 Gb/sec SAS links. The UltraStar SAS SSD line has three different levels of endurance, with read-intensive drives rated for 2 drive writes per day (DW/D), mainstream drives able to sustain 10 DW/D, and high endurance drives coping with 25 DW/D. All of the units have 2 million hours for their mean time between failure rating (which is as good as any disk drive) and a five year warranty. The high-endurance SSDs come in 100 GB to 800 GB capacities, the mainstream drives double that up to 200 GB to 1.6 TB, and the read-intensive drives offer 250 GB to 1.6 TB. The drives can sustain up to 1,100 MB/sec of throughput and deliver up to 130,000 IOPS on random reads and up to 110,000 IOPS on random writes. They also sport secure erase and encryption.
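The drive-writes-per-day ratings translate into total data written over the warranty period with simple arithmetic. The sketch below assumes decimal units (1 TB = 1,000 GB), 365-day years, and that the DW/D rating applies across the full five-year warranty; the function name is illustrative, and HGST may specify endurance differently on its data sheets.

```python
def lifetime_writes_tb(capacity_gb, drive_writes_per_day, warranty_years=5):
    """Total terabytes written if the drive is filled `drive_writes_per_day`
    times every day for the whole warranty period.
    ASSUMPTIONS: decimal units, 365-day years, rating holds for all years."""
    days = 365 * warranty_years
    return capacity_gb * drive_writes_per_day * days / 1000


# Largest capacity in each endurance class quoted above.
classes = {
    "high endurance (800 GB, 25 DW/D)": (800, 25),
    "mainstream (1.6 TB, 10 DW/D)": (1600, 10),
    "read intensive (1.6 TB, 2 DW/D)": (1600, 2),
}

for name, (capacity_gb, dwpd) in classes.items():
    total_tb = lifetime_writes_tb(capacity_gb, dwpd)
    print(f"{name}: {total_tb:,.0f} TB written over five years")
```

On those assumptions, the top high-endurance drive works out to about 36.5 PB of writes over five years, against 29.2 PB for the largest mainstream drive and about 5.8 PB for the largest read-intensive one.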

The FlashMAX III cards introduced this week at the Flash Memory Summit come to HGST through Western Digital’s acquisition of Virident in September 2013 for $685 million. The new flash card comes in a half-height, half-length form factor that means it can fit into just about any server out there on the market. It is based on 20 nanometer NAND flash memory from Micron, and while HGST is not providing prices on the unit, it says it can deliver a random read IOPS for about $1, which is half the cost of the FlashMAX II generation announced last year. That improvement in price/performance is due to a combination of higher performing flash and lower prices, Hansen tells EnterpriseTech.

The FlashMAX III will come in 1.1 TB, 1.65 TB, and 2.2 TB capacities, and sports a PCI-Express 3.0 x8 connector, which is important because the more current PCI-Express has about twice the bandwidth of the 2.0 generation. The device can deliver sustained read bandwidth of 2.7 GB/sec and about 541,000 IOPS on 4 KB random reads; sustained write bandwidth is around 1.4 GB/sec with around 77,000 IOPS on 4 KB random writes. On a 70-30 mix of 4 KB reads and writes, the FlashMAX III handles about 200,000 IOPS. The FlashMAX III will be available in the third quarter. While customers can add multiple cards to servers to scale up their I/O and capacity, most hyperscale datacenters have resiliency and scale at the node level and tend to put one card in a node, says Hansen.
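The "about twice the bandwidth" figure follows from the signaling rates and line encodings of the two PCI-Express generations: 2.0 runs at 5 GT/sec with 8b/10b encoding, while 3.0 runs at 8 GT/sec with 128b/130b encoding. The sketch below computes raw per-direction link rates only; it ignores packet and protocol overhead, so usable throughput will be lower. The function name is illustrative.

```python
def lane_bytes_per_s(gigatransfers_per_s, payload_bits, encoded_bits):
    """Raw one-direction bandwidth of a single lane after line encoding.
    Ignores packet headers and flow control, so real throughput is lower."""
    bits_per_s = gigatransfers_per_s * 1e9 * payload_bits / encoded_bits
    return bits_per_s / 8


gen2_lane = lane_bytes_per_s(5.0, 8, 10)      # PCI-Express 2.0, 8b/10b
gen3_lane = lane_bytes_per_s(8.0, 128, 130)   # PCI-Express 3.0, 128b/130b

print(f"2.0 per lane: {gen2_lane / 1e6:.0f} MB/sec")
print(f"3.0 per lane: {gen3_lane / 1e6:.0f} MB/sec")
print(f"3.0 vs 2.0:   {gen3_lane / gen2_lane:.2f}x")
print(f"2.0 x4 link:  {4 * gen2_lane / 1e9:.2f} GB/sec")
print(f"3.0 x8 link:  {8 * gen3_lane / 1e9:.2f} GB/sec")
```

By this raw measure a 3.0 x8 slot offers just under 7.9 GB/sec in each direction, which leaves plenty of headroom above the card's quoted 2.7 GB/sec sustained reads.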

HGST is also home to two other flash-related acquisitions, Velobit and STEC. Both had created caching software to manage flash cache on servers and storage arrays to accelerate applications. The Velobit software had better algorithms and superior performance, according to HGST, while the STEC software had the best user interface and a better user experience. These have been merged together to create ServerCache 4.0, which also has additional features.

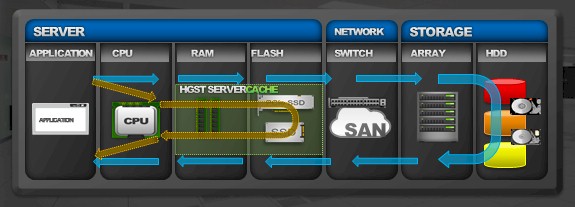

The ServerCache software works across any block-level storage device and runs atop either Windows Server or Linux. The CPU overhead of using the caching software is on the order of 5 percent, the company says. Fusion-io and SanDisk had independently created or acquired their own caching software (and now it is all under the SanDisk umbrella after SanDisk bought Fusion-io), and now SanDisk and HGST find themselves competing against the open source Flashcache created by Facebook. ServerCache has a number of different caching algorithms, including write through (data is stored on disk and read from cache), write back (often called persistent caching, which means writes are stored on the flash and lazily committed to the disk storage), warm (which means the cache is ready to go after a reboot and is not emptied), and sequential filtering (which means that big sequential I/O dumps like backups are detected and are written around the cache, not through it).
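To make the difference between these caching modes concrete, here is a toy sketch in Python. It is not how ServerCache is implemented: the class, its method names, and the run-length threshold used to spot sequential streams are all invented for illustration, and it ignores cache eviction and the warm-after-reboot behavior entirely.

```python
class ToyBlockCache:
    """Illustrative block cache; all names and thresholds are hypothetical."""

    def __init__(self, mode="write_through", seq_threshold=4):
        assert mode in ("write_through", "write_back")
        self.mode = mode
        self.seq_threshold = seq_threshold  # run length treated as a stream
        self.disk = {}       # stands in for the slow backing store
        self.cache = {}      # stands in for the flash cache
        self.dirty = set()   # cached blocks not yet committed to disk
        self._last_block = None
        self._run_length = 0

    def _is_sequential(self, block):
        # Count consecutive block numbers on the write path only.
        if self._last_block is not None and block == self._last_block + 1:
            self._run_length += 1
        else:
            self._run_length = 1
        self._last_block = block
        return self._run_length >= self.seq_threshold

    def write(self, block, data):
        if self._is_sequential(block):
            # Sequential filtering: write around the cache, straight to disk.
            self.cache.pop(block, None)
            self.dirty.discard(block)
            self.disk[block] = data
            return
        self.cache[block] = data
        if self.mode == "write_through":
            self.disk[block] = data      # committed to disk immediately
        else:
            self.dirty.add(block)        # committed lazily by flush()

    def read(self, block):
        if block in self.cache:
            return self.cache[block]     # cache hit
        data = self.disk.get(block)
        if data is not None:
            self.cache[block] = data     # populate the cache on a miss
        return data

    def flush(self):
        # Lazily commit write-back data to the backing store.
        for block in sorted(self.dirty):
            self.disk[block] = self.cache[block]
        self.dirty.clear()


demo = ToyBlockCache(mode="write_back")
demo.write(7, "hot row")
print("on disk before flush:", 7 in demo.disk)
demo.flush()
print("on disk after flush: ", 7 in demo.disk)
```

In write-back mode the write lands only in the cache until `flush()` runs, which is where the latency win comes from and also why the flash must be persistent. A long run of consecutive block numbers, such as a backup, trips the threshold and bypasses the cache so it does not push out hot data.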

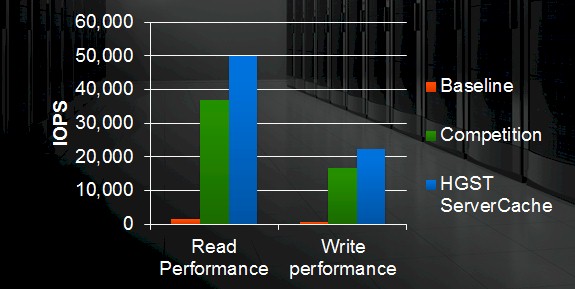

On a database benchmark test at a customer site running the HammerDB database testing tool in conjunction with PostgreSQL, the ServerCache 4.0 tool was able to deliver about 30X more performance than a baseline PostgreSQL database running atop spinning rust. The competition, which was unnamed, was bested by ServerCache 4.0 by about 35 percent. ServerCache 4.0 is available now and costs $995 per server node.

In case you haven’t figured it out, HGST might have been a disk drive maker like parent company Western Digital, but it takes non-volatile memory technologies very seriously. In its fiscal year ended this past April, the company booked $508 million in enterprise SSD sales, which is where all of the SSD, flash cards, and other related software revenues end up on the Western Digital books. Two years ago, before many of the acquisitions, this was a $65 million business, and in fiscal 2013 it hit $355 million. HGST has traditionally sold its SSDs and flash cards to OEM partners in the server and storage businesses, but Hansen says that the company has been picking up more and more business selling directly to hyperscale datacenter operators and cloud builders and is even seeing some direct sales to large enterprises building out their infrastructure to accelerate applications.