Intel Launches ‘Knights Landing’ Phi for Machine Learning, HPC

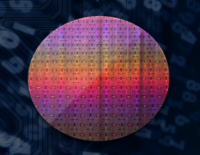

Source: Intel

From ISC 2016 in Frankfurt, Germany, Intel Corp. launched the second-generation Xeon Phi product family, code-named Knights Landing, aimed at HPC and machine learning workloads. The company had been shipping “Knights Landing” silicon to early customers for six months and waited to ramp up production before making the product generally available.

The window also gave OEMs time to finish readying their systems, said Intel’s Charlie Wuischpard, vice president of the Data Center Group and general manager of the High Performance Computing Platform Group, in a media pre-briefing. Those OEMs include the usual names: Cray, HPE, Lenovo, Dell and others.

The chip’s most distinguishing feature is that it is a bootable host CPU, unlike its predecessor “Knights Corner,” which was a coprocessor that connects over PCIe. “We’re not just a specialized programming model,” said Barry Davis, Intel’s General Manager, HPC Compute and Networking, in a hands-on technical demo held at ISC. “We’re the full IA programming model. There’s no PCIe bottleneck; there’s a limitation in the data that you can send back and forth from the host CPU to the accelerator or coprocessor and we removed that bottleneck.”

The “Knights Landing” Phi will be the first chip to offer an integrated fabric, Intel’s Omni-Path Architecture (OPA), in the package. “Knights Landing” also puts integrated on-package memory in a processor, which benefits memory bandwidth and overall application performance. A six-channel memory controller supports up to 384 GB of DDR4-2400 memory (~90 GB/s sustained bandwidth). There are 36 PCI Express 3.0 lanes for connecting to PCIe coprocessors, PCIe SSDs or discrete graphics cards.
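For a sense of scale, the DDR4 and PCIe figures above can be checked with some back-of-envelope arithmetic. The sketch below assumes a standard 64-bit (8-byte) DDR4 channel and PCIe 3.0 signaling of 8 GT/s per lane with 128b/130b encoding; neither detail comes from Intel’s announcement, and the result is a theoretical peak rather than a measured number.

```python
# Back-of-envelope check of the Knights Landing DDR4 and PCIe figures.
# Assumptions (not from Intel's announcement): each DDR4 channel is 64 bits
# (8 bytes) wide; PCIe 3.0 runs at 8 GT/s per lane with 128b/130b encoding.

DDR4_CHANNELS = 6
DDR4_TRANSFERS_PER_SEC = 2400e6   # DDR4-2400: 2400 mega-transfers per second
BYTES_PER_TRANSFER = 8            # 64-bit channel width (assumption)
SUSTAINED_GBPS = 90.0             # ~90 GB/s sustained, as quoted

PCIE_LANES = 36
PCIE3_GT_PER_SEC = 8e9            # 8 GT/s per lane
PCIE3_ENCODING = 128 / 130        # 128b/130b line-code efficiency


def ddr4_peak_gbps(channels, transfers_per_sec, bytes_per_transfer):
    """Theoretical peak DRAM bandwidth in GB/s (1 GB = 1e9 bytes)."""
    return channels * transfers_per_sec * bytes_per_transfer / 1e9


def pcie3_gbps_per_direction(lanes):
    """Usable PCIe 3.0 bandwidth per direction in GB/s, after encoding overhead."""
    return lanes * PCIE3_GT_PER_SEC * PCIE3_ENCODING / 8 / 1e9


peak = ddr4_peak_gbps(DDR4_CHANNELS, DDR4_TRANSFERS_PER_SEC, BYTES_PER_TRANSFER)
print(f"DDR4 peak: {peak:.1f} GB/s")
print(f"Sustained/peak: {SUSTAINED_GBPS / peak:.0%}")
print(f"36 PCIe 3.0 lanes: {pcie3_gbps_per_direction(PCIE_LANES):.1f} GB/s per direction")
```

Under those assumptions the script prints a 115.2 GB/s peak, so ~90 GB/s sustained works out to roughly 78% of theoretical, and the 36 lanes provide about 35.4 GB/s in each direction.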

The second-generation Phi is based on an Intel Atom core (Silvermont microarchitecture) with many HPC enhancements. The MIC (Many Integrated Core) design packs 8 billion transistors onto a die built with 14 nm process technology. The new Phi family introduces the AVX-512 instruction set, which will also be available on future Xeon processors. Phi and Xeon are binary compatible, and one benefit, notes Intel, is that optimizations that apply to one platform typically carry over to the other.

Intel emphasized that the Phi is designed to run any workload, any IA code. “There are workloads out there that are single thread that maybe benefit from higher frequency and fewer cores and of course you would run those on a Xeon but it doesn’t mean those applications won’t still run on a Xeon Phi,” said Wuischpard. “Some of our early customers are implementing an entire supercomputing cluster with Xeon Phi. Others are doing a mix of Xeon and Xeon Phi and there are a lot of configurations that are possible within a given system deployment.”

As previously announced, the Phi product family comes in three variants: a PCIe coprocessor form factor; a stand-alone CPU; and a stand-alone CPU with integrated Omni-Path fabric technology. The SKU stack that Intel is launching includes four parts with different core counts, frequencies, TDPs and price points.

There are three parts shipping now: the 68-core 7250 (1.4 GHz), the 64-core 7230 (1.3 GHz) and the 64-core 7210 (1.3 GHz). The TDP on all three is 215 watts. The top-bin part, the Xeon Phi 7290, is the promised 72-core version. The $6,250 SKU runs at 1.5 GHz, consumes 245 watts and will not be available until September. Integrated-fabric versions of all four parts will not be available until October; powering the fabric adds another 15 watts to the TDP envelope. The coprocessor card will be available in the second half of the year, according to Intel.

“You can think of it as the 7200-series Xeon processor,” said Wuischpard, “You’ll see that all of the memory is 16 GBs across the board. We had originally talked about having a richer matrix of SKUs that ranged from no in-package memory to 16 GB of memory and then across these ranges of performance and it just looked too busy and too complex, and in the end everyone wants that in-package memory so we decided to shrink the SKU stack and make it easier to understand. And it does make it easier from a manufacturing perspective.”

The Xeon Phi 7290 is a premium product with a premium price. This is by design, since it is relatively low-yielding, according to Intel. “Most of our early customers, and this includes the large research labs and institutions, have really focused on the 7230 and the 7250 to get the best price/performance. And we expect the 7210 will be the more general purpose high-running part,” said Wuischpard, adding that it offers 85-90 percent of the performance at less than half the price of the top-end part.

The self-hosted Phi processor competes directly with NVIDIA’s Tesla GPUs, with both products targeting HPC, machine learning and visualization. At its GTC16 event, NVIDIA announced the NVLink-based Pascal P100 GPU. The NVLink point-to-point interconnect enables data sharing at rates five to 12 times faster than traditional PCI Express Gen 3.0. Currently, the NVLink-based P100 is available only to customers who shell out $129,000 for NVIDIA’s “deep learning supercomputer,” the DGX-1, but the standalone NVLink-based P100 is expected to reach production availability in early 2017.
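To put NVIDIA’s “five to 12 times” claim into absolute terms, the sketch below assumes the baseline is a PCIe 3.0 x16 slot (8 GT/s per lane, 128b/130b encoding), measured per direction. NVIDIA did not state its baseline in these terms, so the resulting range is only an illustration of what the multiplier implies.

```python
# What "five to 12 times faster than PCI Express Gen 3.0" implies in absolute terms.
# Assumption: the baseline is a PCIe 3.0 x16 slot (8 GT/s per lane, 128b/130b
# encoding), measured per direction. NVIDIA's own baseline may differ.

PCIE3_GT_PER_SEC = 8e9
PCIE3_ENCODING = 128 / 130
LANES_X16 = 16


def pcie3_x16_gbps():
    """Usable PCIe 3.0 x16 bandwidth per direction, in GB/s."""
    return LANES_X16 * PCIE3_GT_PER_SEC * PCIE3_ENCODING / 8 / 1e9


def implied_range(baseline_gbps, low=5, high=12):
    """Bandwidth range implied by a 'low-to-high times faster' claim."""
    return baseline_gbps * low, baseline_gbps * high


base = pcie3_x16_gbps()
lo, hi = implied_range(base)
print(f"PCIe 3.0 x16: {base:.2f} GB/s per direction")
print(f"5x-12x of that: {lo:.0f}-{hi:.0f} GB/s")
```

With this baseline of about 15.75 GB/s, the claim corresponds to roughly 79-189 GB/s; a different baseline (for example, bidirectional bandwidth) would shift the range accordingly.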

Intel talks about scalability as being a big difference between a GPU card and Xeon Phi. “With GPU cards, you can only put so many cards in a box,” said Intel’s Barry Davis. “Even with NVLink to connect those together, you are still limited in that scale. As you look at the Xeon Phi product line with implementations at thousands of nodes, scalability is a key part of this architecture, and that’s what the market needs today, whether you are talking about machine learning, deep learning or traditional modeling and simulation.”

When it comes to artificial intelligence and deep learning, Intel has published several initial benchmarks claiming performance improvements over GPUs on a number of machine learning workloads.

NVIDIA’s VP of Solutions Architecture and Engineering, Marc Hamilton, said he questions the benchmarks Intel has released so far, noting that the deep learning claims were made against older (Kepler) GPUs using unoptimized versions of the frameworks. [The benchmark breakdown was unavailable on Intel’s site as of press time.] Hamilton also said that Knights Landing lacks the GPU’s strong-node capability. NVIDIA GPUs currently scale to 8-way configurations, but the OS will support 16 GPUs (recall that the K80 has two physical GPUs on one board, and the OS will support eight K80s).

There’s also a performance difference between the second-generation Phi and the newest Tesla GPUs. The top-bin Knights Landing Phi CPU delivers 3.46 teraflops of double-precision floating point performance. The Pascal P100 GPU for NVLink-optimized servers offers 5.3 teraflops of double-precision performance, and the PCIe version delivers 4.7 teraflops.
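The 3.46-teraflop figure can be reproduced from core count and clock. The sketch below assumes each Knights Landing core has two AVX-512 vector units, each completing one fused multiply-add on eight doubles per cycle (2 × 8 × 2 = 32 double-precision FLOPs per cycle per core), evaluated at the base clock. That per-core rate is our assumption, not a figure from the article, and sustained clocks under heavy AVX-512 load may differ.

```python
# Nominal peak double-precision throughput for the Xeon Phi 7200-series SKUs.
# Assumption (not stated in the article): 2 AVX-512 vector units per core, each
# doing one FMA on 8 doubles per cycle -> 32 DP FLOPs/cycle/core at base clock.

DP_LANES = 512 // 64          # 8 doubles per 512-bit register
VPUS_PER_CORE = 2
FLOPS_PER_FMA = 2             # one multiply + one add
DP_FLOPS_PER_CYCLE = VPUS_PER_CORE * DP_LANES * FLOPS_PER_FMA  # 32

# (cores, base clock in GHz), taken from the SKU stack described in the article
SKUS = {
    "7290": (72, 1.5),
    "7250": (68, 1.4),
    "7230": (64, 1.3),
    "7210": (64, 1.3),
}


def peak_dp_tflops(cores, ghz):
    """Nominal peak double-precision TFLOPS at the base clock."""
    return cores * ghz * DP_FLOPS_PER_CYCLE / 1000


for sku, (cores, ghz) in SKUS.items():
    print(f"Xeon Phi {sku}: {peak_dp_tflops(cores, ghz):.2f} TF")

# Ratios of the quoted Pascal P100 figures to the top-bin Phi
knl_top = peak_dp_tflops(*SKUS["7290"])
print(f"P100 NVLink / 7290: {5.3 / knl_top:.2f}x")
print(f"P100 PCIe   / 7290: {4.7 / knl_top:.2f}x")
```

Under this assumption the 7290 comes out at 3.46 TF, matching Intel’s number, with 3.05 TF for the 7250 and 2.66 TF for the 7230 and 7210. The quoted P100 figures are roughly 1.53x (NVLink) and 1.36x (PCIe) the top-bin Phi on peak double precision.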

One early customer who has already deployed a Knights Landing Phi-based system is the Texas Advanced Computing Center (TACC) at the University of Texas at Austin. TACC got the 508-node system, an interim step between Stampede 1 and Stampede 2, up and running and benchmarked on LINPACK three days after receiving its racks.

TACC Director Dan Stanzione wryly commented that that is not his preferred timeframe, but the result was a 117th-place ranking on the latest TOP500 with a LINPACK of 817.8 teraflops. “Obviously the software came up pretty quickly in order to make that happen,” said Stanzione.

“We finished all of our benchmarking,” he continued, “and we’re putting users on it this week and are running our first tutorial on Sunday here at ISC.” The system uses the 68-core Xeon Phi 7250, one bin below the top part, together with the Omni-Path fabric.
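A rough LINPACK efficiency for TACC’s system can be estimated from the figures above. This sketch assumes one Xeon Phi 7250 per node, all 508 nodes in the run, and 32 double-precision FLOPs per cycle per core at the 1.4 GHz base clock. That per-core rate is an assumption rather than a number from the article, and the result is a nominal peak, not the Rpeak TACC reported to the TOP500, so the ratio is only as good as those inputs.

```python
# Rough LINPACK efficiency estimate for TACC's 508-node Knights Landing system.
# Assumptions: one Xeon Phi 7250 per node, all 508 nodes in the run, and
# 32 DP FLOPs/cycle/core at the 1.4 GHz base clock. Nominal peak only.

NODES = 508
CORES_PER_NODE = 68
BASE_GHZ = 1.4
DP_FLOPS_PER_CYCLE = 32       # assumed: 2 AVX-512 FMA units x 8 doubles x 2 FLOPs
RMAX_TFLOPS = 817.8           # LINPACK result quoted in the article


def nominal_rpeak_tflops(nodes, cores, ghz, flops_per_cycle):
    """Nominal system peak in TFLOPS: nodes x cores x GHz x FLOPs/cycle."""
    return nodes * cores * ghz * flops_per_cycle / 1000


rpeak = nominal_rpeak_tflops(NODES, CORES_PER_NODE, BASE_GHZ, DP_FLOPS_PER_CYCLE)
print(f"Nominal Rpeak: {rpeak:.1f} TF")
print(f"Rmax / nominal Rpeak: {RMAX_TFLOPS / rpeak:.1%}")
```

With these inputs the nominal peak is about 1547.6 TF, putting the 817.8-teraflop result at roughly 52.8% of it.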