What I Saw at the Enterprise IT Revolution: Radical Analytics Chic

If "a revolution is an idea that has found its bayonets" (Napoleon), then data analytics is the bayonet driving a revolution now underway in enterprise IT. It’s a revolution not only of technology but of the mind, reshaping enterprise IT strategies and overturning a traditionally cautious mindset to the point where the IT worldview, at least where high-performance data analytics (HPDA) and hyperscale are concerned, has begun to overlap with the more aggressive approach found in technical computing.

But like the American Revolution, which has been called a “conservative revolution,” there are conservative elements – daunting ones – to the revolution in enterprise IT. This is because IT managers face two mandates in conflict: adopt a host of new HPC-class technologies emerging from the technical computing world that enable HPDA while also integrating those new technologies with existing, sometimes decades-old, legacy enterprise systems. This means embracing the high performance orientation of at-scale technical computing (read: burst capacity, scalability on demand) while maintaining customary enterprise IT standards for RAS (reliability, availability, serviceability) and minimizing costs (read: high utilization rates).

So if “the test of a first-rate intelligence is the ability to hold two opposed ideas in mind at the same time and still retain the ability to function” (F. Scott Fitzgerald), then first-rate intelligence is the order of the day in enterprise IT.

Addison Snell, CEO of industry analyst firm Intersect360 Research, presented insights on these developments in a webinar this week entitled "The New Rules of Enterprise IT," hosted by cluster management vendor Bright Computing.

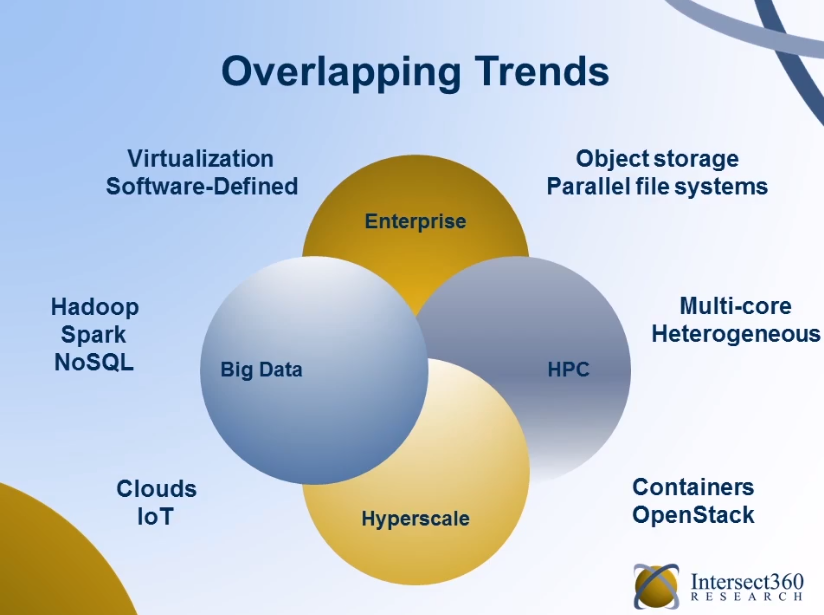

“It’s really put us in a situation where all of these different trends from all of these different segments of the market, big data and analytics, high performance computing, hyperscale, are all having significant influence into general enterprise IT,” said Snell. “These are now overlapping trends that touch and start to meet.”

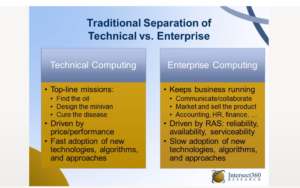

Snell cited a colleague’s (Chris Willard, Intersect360’s chief research officer) characterization of the traditionally segmented, contrasting outlooks of technical computing and enterprise IT:

“In technical computing, the watchwords are: ‘once you solve a problem it’s no longer interesting.’ You don’t need to solve the same problem twice. You move on to the next bridge, the next drug, the next car design. You’re constantly chasing a new level of problem, and that will remain true until some day we wake up and decide we’ve reached the end of science… Until then…there’s always a tougher scientific or tougher engineering problem to solve, and that’s what drives high performance computing.

“In enterprise computing, by contrast, the watchwords are: ‘once you’ve solved the problem, for heaven’s sake please don’t touch it. My payroll database is working, I really don’t want to rip it out and replace it, what I care about is that it works over time.’”

“It’s not that performance is unimportant (in business computing),” Snell said, “but it certainly is traditionally not the dominant purchase criterion. There’s not been a whole lot of motivation to get the payroll database to run 10 percent faster; there’s more interest in it running reliably, protecting that investment.”

The result, Snell said, is that “traditionally, technical computing has been marked by relatively faster adoption of new techniques, new technologies, new algorithms, new ways of doing a problem, whereas enterprise computing has traditionally been more conservative, with slower adoption of all of those things.”

But that began to change rapidly two years ago, when big data began to take hold of enterprise IT.

Big data isn’t one particular application or a single event in enterprise IT. It’s “really a set of industry trends where organizations…are having more data, more access to data, more types of data and the challenge in front of them is being able to manage that data and gain critical insights from that data,” Snell said. “And that starts to stress their organizational capabilities, and this is pandemic across all segments of IT.” The overload, he said, is happening in both technical and business computing.

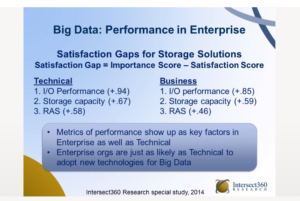

Snell said Intersect360 studies of enterprise IT and technical computing end users reveal the mindset shift happening in enterprise IT.

“What we found, remarkably, was that the responses were very similar,” Snell said, particularly when measuring “satisfaction gaps,” which identify technologies and solutions that respondents rate high in importance but low in satisfaction.

“Metrics of IO performance wound up being the number one satisfaction gap for technical users, for business users, for commercial, for academic, government, large, medium, small – every different type of workload we surveyed, it all came down to these metrics of IO performance and scalability.

“Whether they were in technical computing or not, when you have large scale big data analytics, it introduced a new type of workload into the enterprise wherein the enterprise computing problem took on more of a technical computing mindset,” Snell said, “where you’re looking at metrics where scalability and performance are the top criteria, you’re showing the same willingness as a technical computing respondent to look at new techniques, new technologies... This is the first time we really started seeing this mindset getting driven from technical computing into enterprise computing for a category of problem.”
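As a rough illustration of the “satisfaction gap” idea, the sketch below assumes a gap is simply an item's mean importance rating minus its mean satisfaction rating, and ranks items by that difference. The formula, the 1-to-5 scale, the item names and every number are assumptions made for illustration; none of it is Intersect360 data or its published methodology.

```python
from dataclasses import dataclass


@dataclass
class SurveyItem:
    """One surveyed capability with hypothetical mean ratings (1-5 scale)."""
    name: str
    importance: float
    satisfaction: float


def satisfaction_gap(item: SurveyItem) -> float:
    # Assumed definition: how far importance outruns satisfaction.
    return item.importance - item.satisfaction


def rank_by_gap(items: list[SurveyItem]) -> list[SurveyItem]:
    # Largest gap first: important to respondents, yet poorly satisfied.
    return sorted(items, key=satisfaction_gap, reverse=True)


# Made-up ratings, for illustration only.
items = [
    SurveyItem("Price", 4.0, 3.5),
    SurveyItem("I/O performance", 4.5, 3.0),
    SurveyItem("Scalability", 4.25, 3.25),
]

ranked = rank_by_gap(items)
for item in ranked:
    print(f"{item.name}: gap = {satisfaction_gap(item):.2f}")
```

With these invented ratings, I/O performance tops the ranking because it combines the highest importance with the lowest satisfaction, which is the pattern Snell describes across respondent groups.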

A similar phenomenon is happening in hyperscale, formerly called “ultrascale internet,” which Snell said his company has been tracking since 2007.

“But starting in 2014, two years ago, it became evident to us that what’s known as the hyperscale market had grown and evolved to where it was really its own segment of the IT industry,” he said. It extends beyond the top tier of famous hyperscalers – Google, Facebook, eBay, Amazon – that spend more than a billion dollars a year on IT infrastructure. It also includes next-tier companies such as Netflix, Pinterest, Wikipedia and AccuWeather, “that have to maintain web facing application infrastructures that are arbitrarily scalable. And they wind up being influenced by all of these same trends.”

The upshot: for top-tier users, whether at a national supercomputing lab on the technical computing side or at a tier-one hyperscaler, “the technological choices they make, particularly in software, start bubbling through the rest of the industry.”

An offshoot of these convulsions is what Snell calls the “New Rules of Enterprise IT,” which he identifies as:

- Cost Containment vs. Burst Capacity: The ingrained enterprise value of running a tight IT ship at maximum ROI is running up against the more free-wheeling world of technical computing, which values speeds and feeds, and is more willing to pay for them, in support of analytics workloads.

“The fundamental underpinnings of enterprise IT are still there,” Snell said. “What these trends have done is a layering on of these new requirements that become part of the IT landscape. In some senses they can be contradictory almost to the way you think about running enterprise IT in your organization, starting with low cost. …that’s achieved a lot of the time through high utilization, you want to virtualize things so you spread out all the cycles so you run at a really high utilization rate, you’re not buying any excess capacity… But at the same time you have to build in elastic capacity for these new types of (analytics) workloads.”

More capacity can be added through the use of public clouds, hybrid clouds and containers, but this adds cost. “It’s a new rule for enterprise IT. You can’t just say I’m going to do everything the cheapest way I can at the highest utilization and then I’m done. You need to additionally answer the question: How do you burst to elastic capacity and capability as needed?”

- Security and Sprawl: Uncompromised security remains a critical underpinning of traditional enterprise IT. But in a world in which enterprise IT has spread far beyond the four walls of traditional on-premises IT, this is an increasingly difficult challenge. “Everything is even more connected now than it’s been, with mobile, with IoT, with cloud, with increased collaboration. You’re trying to use IT as a tool to link all of these things, but at the same time you can’t give away anything on the security side. So it’s an additional rule, an additional level of complexity.”

- When Old and New Worlds Collide: In a corollary of the first rule, IT managers must, as mentioned, merge new-world and old-world workloads so that the value and insights generated by big data and analytics applications, and the technologies that support them at scale, coexist with existing, older systems – payroll, personnel, ERP, CRM, etc. “It’s not acceptable to say, ‘I’ve added some new function and then something broke.’ You can’t do that in enterprise IT. You have to protect your legacy workloads as you have them, but at the same time you’re trying to bring on these new (analytics) capabilities, which in many cases means new technologies, it means new approaches, new software, new middleware, new ways of doing business. And to do that in a way that protects your initial investments can be quite a challenge.”

Snell discussed an important adjunct to the new rules: organizations are embracing a wider variety of processor architectures (GPUs, FPGAs, ARM, etc.) based on their unique analytics requirements.

“We’ve been in an architectural era when everything was similar,” he said. “X86 clusters were x86 clusters and people ran similar sets of tools, and a good way to know you were on the right track was that you were doing the same thing everyone else was doing.

“Now we’re moving toward an era of specialization again, and it’s worth thinking about how what you’re doing is different from what other people are doing. Yes, you want to be influenced by these trends, but you don’t want to do everything exactly the same as everybody else, either. You need to pick and choose the appropriate tools and capabilities for your environment, what makes sense for your own special workloads.”

Many of these convulsions in enterprise IT fall on the shoulders of IT administrators, who may look for help in embracing a more technical computing mindset – and skillset.

“The skills gap is the biggest part of this challenge,” Snell said. “If you’re an IT administrator, over the course of a 40-year career you may find that as technology evolves you might have to completely refresh your skillset every 10 years… Now we’re changing the rules again where the architecture itself is starting to differentiate and you’re bringing in things like cloud utility computing while you’re adding new workloads at the same time. So I’m afraid to say that it’s refresh time again.”