Nvidia’s Mammoth Volta GPU Aims High for AI, HPC

At Nvidia’s GPU Technology Conference (GTC17) in San Jose, Calif., this morning, CEO Jensen Huang announced the company’s much-anticipated Volta architecture and flagship high-end GPU, the Tesla V100, noting that it took several thousand engineers several years to create, at an approximate development cost of $3 billion.

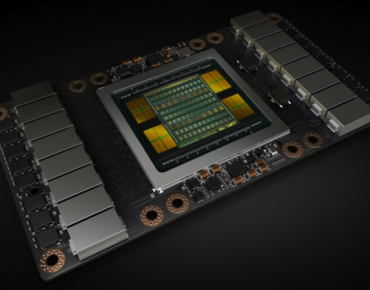

One thing is undeniable about the Volta V100: it is a giant chip, 33 percent larger than the Pascal P100 and once again “the biggest GPU ever made.” Fabricated by TSMC on a custom 12-nm FFN high-performance manufacturing process, the V100 GPU squeezes 21.1 billion transistors and almost 100 billion via connectors onto an 815 mm² die, about the size of an Apple Watch, said Huang.

“It is at the limits of photolithography,” Huang told the crowd. “You can’t make a chip any bigger than this because transistors would fall on the ground. Every single transistor that is possible to make by today’s physics was crammed into this processor.”

“To make one chip work per 12-inch wafer, I would characterize as unlikely,” added the CEO. “And so the fact that this was manufactured was a great feat.”

This is a domain-specific chip, said Jonah Alben, senior vice president of GPU engineering at Nvidia. “This chip can run games very well if we want it to, but the focus [of the V100] is to be a great chip for AI and for HPC, so we dedicated all the resources we could until it was illegal to do more.”

“The first thing to know about Volta is it’s a giant leap for machine learning,” followed Luke Durant, principal engineer, CUDA Software, at Nvidia. “[However,] we still are completely focused on high-performance computing. Across the board we’re seeing about a 1.5x speedup as compared to Pascal, just one year ago.”

Volta is a major launch for Nvidia, but not exactly a surprise. Back in 2014, the architecture was tapped to power the next-generation CORAL supercomputers, Summit and Sierra, in partnership with IBM, Mellanox and the Department of Energy. Those computers, expected to reach at least 200 petaflops of performance, are now due to be installed later this year into early 2018.

The new V100 touts spec’d performance of 7.5 teraflops double-precision, 15 teraflops single-precision, and 30 teraflops half-precision. This is nearly a 42 percent increase in peak flops over one year.
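The year-over-year figure can be checked with a few lines of arithmetic. The sketch below uses the V100 numbers quoted above; the Pascal P100 peak figures (5.3, 10.6, and 21.2 teraflops) are not given in this article and are taken here as an assumption from Nvidia's published P100 SXM2 specifications.

```python
# Peak teraflops for the V100 as quoted in the article.
v100_tflops = {"fp64": 7.5, "fp32": 15.0, "fp16": 30.0}

# ASSUMPTION: P100 (SXM2) peak teraflops from Nvidia's published specs;
# these values do not appear in the article itself.
p100_tflops = {"fp64": 5.3, "fp32": 10.6, "fp16": 21.2}


def percent_increase(new, old):
    """Relative increase of `new` over `old`, in percent."""
    return (new / old - 1.0) * 100.0


# Generational gain per precision.
gains = {
    precision: percent_increase(v100_tflops[precision], p100_tflops[precision])
    for precision in v100_tflops
}

for precision, gain in gains.items():
    print(f"{precision}: {gain:.1f}% higher peak than P100")
```

Under those assumed P100 figures, all three precisions work out to the same gain of a little under 42 percent, in line with the number cited.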

The Volta architecture introduces a brand new type of processor, Tensor Core, designed to accelerate AI workloads. With 640 Tensor Cores (8 per SM), V100 delivers 120 teraflops of deep learning performance, providing up to 12x higher peak teraflops for Tensor operations compared with previous-generation silicon.

Volta is also slated to provide up to 60 tera-ops of INT8 performance. Nvidia kept the INT8 instructions to maintain compatibility with existing code bases and also reported that having a dedicated integer unit on Volta would help write machine learning kernels.

“With the V100, the most important statement isn’t the raw performance, although Nvidia managed to raise eyebrows with that,” commented Intersect360 CEO Addison Snell. “It’s that they are designing chips for double-precision 64-bit performance, single-precision 32-bit performance, or tensor performance, in the same package, so a single processor targets a range of applications in AI and HPC.”

Volta comes with 6MB of L2 cache and 16GB of HBM2 memory, providing 900 GB/s of bandwidth. The SXM2 form factor V100 features NVLink2 connectivity with nearly twice the throughput of the prior-generation NVLink, going from 160 GB/s to 300 GB/s. Designers accomplished this by adding 50 percent more links and running them 28 percent faster.

Similar to the Pascal GP100, the Volta GV100 incorporates 64 FP32 cores and 32 FP64 cores per streaming multiprocessor (SM); however, the new GPU has 80 SMs compared with 56 on the GP100. It thus has many more registers and supports more threads, warps, and thread blocks than previous-generation Tesla GPUs, according to Nvidia.
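The headline peak numbers follow roughly from these per-SM counts. The sketch below is a back-of-the-envelope estimate, not Nvidia's own calculation. It relies on three assumptions not stated in this article: a boost clock of about 1455 MHz, a fused multiply-add (FMA) counted as two floating-point operations, and each Tensor Core performing 64 FMAs per clock.

```python
# Figures from the article.
SM_COUNT = 80             # SMs enabled on the V100
FP32_CORES_PER_SM = 64
FP64_CORES_PER_SM = 32
TENSOR_CORES_PER_SM = 8

# ASSUMPTIONS (not stated in the article):
BOOST_CLOCK_HZ = 1.455e9          # approximate V100 boost clock
FLOPS_PER_FMA = 2                 # one multiply plus one add
FMAS_PER_TENSOR_CORE_CLOCK = 64   # one 4x4x4 matrix multiply-accumulate


def peak_tflops(units_per_sm, fmas_per_unit_per_clock=1):
    """Peak teraflops for one kind of execution unit across the whole GPU."""
    flops_per_clock = (
        SM_COUNT * units_per_sm * fmas_per_unit_per_clock * FLOPS_PER_FMA
    )
    return flops_per_clock * BOOST_CLOCK_HZ / 1e12


tensor_core_count = SM_COUNT * TENSOR_CORES_PER_SM
fp32_peak = peak_tflops(FP32_CORES_PER_SM)
fp64_peak = peak_tflops(FP64_CORES_PER_SM)
tensor_peak = peak_tflops(TENSOR_CORES_PER_SM, FMAS_PER_TENSOR_CORE_CLOCK)

print(f"Tensor Cores: {tensor_core_count}")
print(f"FP32 peak:   {fp32_peak:.1f} TFLOPS")
print(f"FP64 peak:   {fp64_peak:.1f} TFLOPS")
print(f"Tensor peak: {tensor_peak:.1f} TFLOPS")
```

With the assumed clock, the estimates land just under the rounded 15, 7.5, and 120 teraflops Nvidia quotes, and the Tensor Core count matches the 640 cited for the chip.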

Major features of the Volta SM include:

+ New mixed-precision FP16/FP32 Tensor Cores purpose-built for deep learning matrix arithmetic.

+ Enhanced L1 data cache for higher performance and lower latency.

+ Streamlined instruction set for simpler decoding and reduced instruction latencies.

+ Higher clocks and higher power efficiency.

“It has a completely different instruction set than Pascal,” remarked Bryan Catanzaro, vice president, Applied Deep Learning Research at Nvidia. “It’s fundamentally extremely different. Volta is not Pascal with Tensor Core thrown onto it – it’s a completely different processor.”

Catanzaro, who returned to Nvidia from Baidu six months ago, emphasized how the architectural changes wrought greater flexibility and power efficiency.

“It’s worth noting that Volta has the biggest change to the GPU threading model basically since I can remember and I’ve been programming GPUs for a while,” he said. “With Volta we can actually have forward progress guarantees for threads inside the same warp even if they need to synchronize, which we have never been able to do before. This is going to enable a lot more interesting algorithms to be written using the GPU, so a lot of code that you just couldn’t write before because it potentially would hang the GPU based on that thread scheduling model is now possible. I’m pretty excited about that, especially for some sparser kinds of data analytics workloads; there’s a lot of use cases where we want to be collaborating between threads in more complicated ways, and Volta has a thread scheduler that can accommodate that.

“It’s actually pretty remarkable to me that we were able to get more flexibility and better performance-per-watt. Because I was really concerned when I heard that they were going to change the Volta thread scheduler that it was going to give up performance-per-watt, because the reason that the old one wasn’t as flexible is you get a lot of energy efficiency by ganging up threads together and having the capability to let the threads be more independent then makes me worried that performance-per-watt is going to be worse, but actually it got better, so that’s pretty exciting.”

Added Alben: “This was done through a combination of process and architectural changes but primarily architecture. This was a very significant rewrite of the processor architecture. The Tensor Core part is obviously very [significant] but even if you look at FP32 and FP64, we’re talking about 50 percent more performance in the same power budget as where we’re at with Pascal. Every few years, we say, hey we discovered something really cool. We basically discovered a new architectural approach we could pursue that unlocks even more power efficiency than we had previously. The Volta SM is a really ambitious design; there’s a lot of different elements in there, obviously Tensor Core is one part, but the architectural power efficiency is a big part of this design.”
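The forward-progress guarantee Catanzaro describes can be illustrated with a toy simulation. The sketch below is plain Python, not CUDA, and is in no way a model of the actual hardware: the names `gpu_thread` and `run_warp` are hypothetical, and the "pre-Volta" policy assumes a worst case in which, once threads in a warp diverge on a spin lock, only the spinning threads keep being scheduled. Under that assumption the lock holder never runs and the warp hangs, while a scheduler that steps every thread independently lets all of them finish.

```python
def gpu_thread(tid, lock, finished):
    """One simulated thread: spin for the lock, hold it one step, release."""
    while lock["owner"] is not None:   # lock busy: retry on the next step
        yield "spin"
    lock["owner"] = tid                # acquire (atomic within one step)
    yield "critical"                   # hold the lock for one scheduling step
    lock["owner"] = None               # release
    finished.append(tid)


def run_warp(independent, n_threads=4, max_steps=200):
    """Run a toy warp; return (completion order, whether all threads finished).

    independent=True  : every live thread advances each round (Volta-like).
    independent=False : ASSUMED worst case for the older model, where after
                        divergence only the spinning path is scheduled.
    """
    lock = {"owner": None}
    finished = []
    live = {t: gpu_thread(t, lock, finished) for t in range(n_threads)}
    state = {}   # last status reported by each live thread
    steps = 0

    while live and steps < max_steps:
        if independent:
            runnable = list(live)
        else:
            spinning = [t for t in live if state.get(t) == "spin"]
            runnable = spinning or list(live)
        for t in runnable:
            try:
                state[t] = next(live[t])
            except StopIteration:      # thread ran to completion
                del live[t]
                state.pop(t, None)
            steps += 1

    return finished, not live


if __name__ == "__main__":
    print("independent scheduling:", run_warp(independent=True))
    print("lockstep worst case:   ", run_warp(independent=False))
```

The `max_steps` cap stands in for the hang: in the lockstep case the loop gives up with no thread having completed, which is the kind of code Catanzaro says "you just couldn’t write before."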

Nvidia showed off three different V100 form factors at GTC: the 300-watt SXM2 (mezzanine) module; an inferencing accelerator for hyperscale that is a 150-watt full-height, half-length (FHHL) PCIe card about the size of a CD case; and the standard PCIe two-slot, full-length card.

V100 GPUs will be available starting next quarter, according to Nvidia. Customers can pre-order the Volta-series DGX-1 box now for $149,000, $20,000 more than the list price for the Pascal-equipped version.

In addition to the coming DGX-1 Volta refresh, Nvidia also released the new DGX Station. Billed as a “personal supercomputer for AI development,” DGX Station provides four NVLink-connected Tesla V100s to deliver 480 (peak) Tensor teraflops in a 1,500-watt water-cooled chassis for $69,000.

Riding the wave of AI and HPC announcements made this week and on the heels of a stronger-than-expected first quarter (recording revenue of $1.94 billion with record datacenter sales of $409 million), Nvidia shares were up 18 percent as of close of market Wednesday, reaching $121.29, an all-time high.

With over a decade’s experience covering the HPC space, Tiffany Trader is one of the preeminent voices reporting on advanced scale computing today.