HPE’s Memory-centric The Machine Coming into View, Opens ARMs to 3rd-party Developers

Announced three years ago, HPE’s The Machine is said to be the largest R&D program in the venerable company’s history, and it may now be progressing toward the epic grandeur that HP (as the company was then known) envisioned in 2014. Certainly, senior HPE managers have high ambitions for the new architecture: nothing less than a new paradigm, called Memory-Driven Computing (MDC), that puts memory, not processing, at the center of the computing platform.

HPE positions The Machine as the architecture for exascale-class performance by the time it’s commercially available in 2019 or 2020, which is roughly the timeframe the U.S. Department of Energy's Exascale Computing Project has set for delivering an exascale machine. Along with completion of the new platform, HPE hopes, will come a broad ecosystem of complementary development. The prototype unveiled today contains an oceanic 160 terabytes (TB) of memory. According to HPE, that is enough to work simultaneously with the data held in every book in the Library of Congress five times over, or approximately 160 million books.

“It has never been possible to hold and manipulate whole data sets of this size in a single-memory system, and this is just a glimpse of the immense potential of Memory-Driven Computing,” the company said in its announcement.

For all the promise of The Machine, today's announcement is not startling. HPE has regularly issued updates on The Machine’s development, most recently last November, when the company said it had successfully demonstrated an MDC proof-of-concept prototype. Today’s news: the prototype operates at scale, Kirk Bresniker, Fellow/VP and chief architect of Hewlett Packard Labs, told EnterpriseTech.

“We wanted to build a system big enough to hold really interesting problems in a way that had never been done before,” Bresniker said. “So we somewhat arbitrarily picked a scale – 160 TBs of memory on a memory fabric. Compare that to the paltry 2 GBs of memory on a typical laptop, that’s 80,000 times bigger. No one’s ever constructed a memory system that large before.”

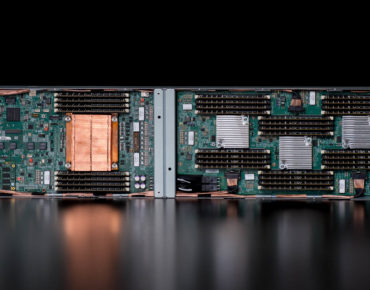

For compute, the prototype runs an optimized Linux-based operating system (OS) across 40 ThunderX2 chips with 32 cores each. ThunderX2 is Cavium’s flagship second-generation, dual-socket-capable, workload-optimized ARMv8-A system on a chip (SoC).

The Machine also has photonic/optical communication links, including the new X1 photonics module, which HPE said are online and operational. It also includes software programming tools designed to take advantage of abundant persistent memory.

Bresniker said Memory-Driven Computing has great potential because the architecture curtails so much of the data movement required for traditional computing.

“Rather than have a GPU hanging off of a PCI Express link - and you have to manage the data back and forth from the general purpose processor out to the GPU and back again - because I have a memory fabric that has an open interface I can place those acceleration resources directly, in direct communications, on the memory fabric,” he said.

Today’s announcement marks a transition beyond internal proof-of-concept.

“We’ve moved on from proving out that each individual piece is working,” said Bresniker, “to the point where now…we can do the handoff from the teams working on the hardware, the firmware, the operating system, to the application development teams, to begin to flex their minds and muscle around the ramifications for having this kind of a platform available to them for the first time.”

From the start of the project, Bresniker said, HPE has taken the somewhat unconventional and risky approach of sharing information about the new platform so that third parties can base their development work on The Machine’s specifications.

“We always knew this had to be bigger than us, that this is a conversation that has to happen across the industry,” he said. “That’s why we started to have the communications so early. When we announced this in 2014, the prototype we’re showing off now was essentially a block diagram scrawled on my whiteboard here in Palo Alto. But we wanted to have the conversation early because we wanted to work with the open source development communities, we needed to engage with them, we needed to engage with our software partners that we’ve traditionally had. We needed to engage with our component supply chain, all the memory, communications and computation components that need to understand how they fit into this memory fabric.”

Based on the current prototype, HPE said it expects the architecture could scale to an exabyte-scale single-memory system and, beyond that, to a nearly limitless pool of memory: 4,096 yottabytes. For context, “that is 250,000 times the entire digital universe today,” HPE said in its announcement. “With that amount of memory, it will be possible to simultaneously work with every digital health record of every person on earth; every piece of data from Facebook; every trip of Google’s autonomous vehicles; and every data set from space exploration all at the same time – getting to answers and uncovering new opportunities at unprecedented speeds.”

“Cavium shares HPE’s vision for Memory-Driven Computing and is proud to collaborate with HPE on The Machine program,” said Syed Ali, president and CEO of Cavium Inc. “HPE’s groundbreaking innovations in Memory-Driven Computing will enable a new compute paradigm for a variety of applications, including the next generation data center, cloud and high performance computing.”