GPU Database Early Adopter Sees Oil & Gas Queries Drop to 100 Milliseconds

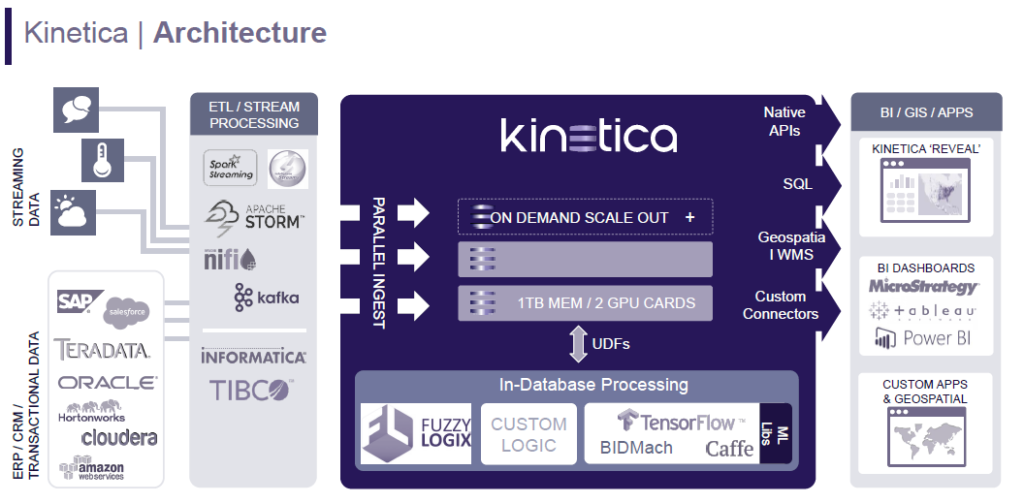

System imbalance – in which processing power, memory capacity and data movement are in a state of disequilibrium – customarily has meant that the processor sits idle waiting for data to be shipped to it. Imbalance continues today, but with the advent of open source tools such as Apache Kafka and Storm, which enable real-time data streaming and rapid data ingest, the problem is reversed: processors can’t keep up with the avalanche of data.

“We hear it all the time from enterprise architects and CTOs,” said Eric Mizell, VP global solutions at Kinetica, a GPU-accelerated database specialist. “‘I can’t throw another CPU at the problem, we have server sprawl. I need denser compute, I need more (processing) from the box than what we’re getting today.’”

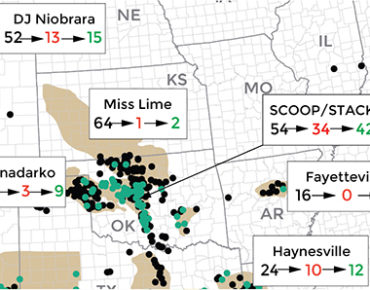

That was the problem faced by Ian Huynh, CTO of RS Energy Group (RSEG), which sells geoinformation and analysis – “energy intelligence” – to oil and gas companies and to investors. RSEG takes large quantities of public data, cleanses and harmonizes it, and then overlays it with its own petro-engineering knowledge in preparation for analytics by its customers. Among its products is RSEG Prism, which allows users to visualize and investigate geoinformation on millions of wells and production data across North America.

About a year ago, RSEG faced a performance shortfall: as its database grew, query responses slowed. RSEG geospatial queries are highly compute intensive, encompassing 4 million wellheads across the U.S. and Canada. They let customers map well locations, zoom in and out of basins, tag a set of wells and examine geoinformation such as location, vertical depth and subsurface characteristics.
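To make the workload concrete, here is a minimal sketch of the kind of viewport query described above: every pan or zoom asks which wells fall inside the visible map rectangle. The `Well` record, the `wells_in_view` function and the three sample wells are hypothetical illustrations, not RSEG's schema or data, and a plain Python scan stands in for whatever the real system does.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Well:
    # Hypothetical record; field names are assumptions for illustration.
    well_id: str
    lat: float
    lon: float
    vertical_depth_m: float


def wells_in_view(wells, south, west, north, east):
    """Return the wells whose location lies inside the viewport (inclusive).

    Assumes the viewport does not cross the antimeridian, which holds
    for a map of North America.
    """
    return [
        w for w in wells
        if south <= w.lat <= north and west <= w.lon <= east
    ]


# Made-up sample points, not real wells.
wells = [
    Well("A", 31.9, -102.1, 2900.0),
    Well("B", 32.4, -101.8, 3100.0),
    Well("C", 47.8, -103.2, 3300.0),
]

in_view = wells_in_view(wells, south=31.0, west=-103.0, north=33.0, east=-101.0)
print([w.well_id for w in in_view])
```

A linear scan like this touches every record on each map movement, which suggests why the cost grows with the dataset when millions of wellheads are involved.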

“We used traditional relational technologies,” Huynh told EnterpriseTech, “but there was a real slowness, an inability to ingest data quickly.” Of particular value to RSEG customers, Huynh said, is rapid iteration on geospatial visualizations.

After investigating a number of options, RSEG faced a quandary: more (literally) of the same, or a GPU database scheme, which at that time was a road less traveled.

“There were two directions we could go in,” Huynh said, “one was traditional Hadoop scale-out and build cheap hardware on top of that and go as fast as we can. We looked at GPU as something for the future, but at that time we felt there might be quite a bit of risk because it was so new.

“But looking at it, from last year to this year, in literally less than 12 months, we’ve seen adoption of GPU has gone from bleeding edge to almost everyone’s talking about it today,” he said. “We’ve felt we were one of the companies that took the risk and adopted it much earlier than others, and we’re glad to see it’s paid off for us handsomely.”

Query response times, which had ranged from 10 seconds to two minutes per query with the old system, have dropped to less than 100 milliseconds with Kinetica. That means customers (Huynh says there are currently about 1,000 end users) can interact rapidly with RSEG data, make more queries and generate more insight from screen renderings.
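As a rough back-of-the-envelope check on those figures, the sketch below computes the implied speedup. It treats 100 milliseconds as the new worst case, so the results are lower bounds; the constant and function names are ours, chosen for illustration.

```python
# Figures quoted in the article, expressed in milliseconds.
OLD_FASTEST_MS = 10_000    # 10 seconds
OLD_SLOWEST_MS = 120_000   # two minutes
NEW_WORST_MS = 100         # "less than 100 milliseconds"


def speedup(old_ms, new_ms):
    """Return how many times faster the new response time is."""
    return old_ms / new_ms


low = speedup(OLD_FASTEST_MS, NEW_WORST_MS)
high = speedup(OLD_SLOWEST_MS, NEW_WORST_MS)
print(f"at least {low:.0f}x to {high:.0f}x faster")
```

In other words, the reported drop corresponds to an improvement of at least two to three orders of magnitude, depending on the query.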

“That’s a critical success factor for us,” Huynh said, “a totally different experience.”

As of now, Huynh said, the RSEG database is a little less than a terabyte in size, but he expects that to grow significantly, which would have posed major performance problems had the company not adopted a GPU database.

“We’re not big data today,” he said, “but a reason we outgrew the traditional CPU-based stack, the Hadoop stack, and that we’re moving into GPU analytics is the ability for us to scale up into petabyte class, into thousands of terabytes scale, without having to add hundreds of nodes clusters, that kind of Hadoop-style process, to consume and process that data. For about a terabyte of data today we can easily consume that in a couple of Kinetica nodes. From a hardware/software standpoint, it’s a fraction of the cost you’d pay out to run a traditional 20-node to 40-node cluster in Hadoop.”

Kinetica began life in 2009 as GIS Federal, building geospatial software for the U.S. Army for tracking persons and things of interest, such as troop movements and equipment, in real time. According to Mizell, the company founders ran into the same trouble that Huynh did: slow response due to the inability to ingest and query data at the same time. “So our CTO (and co-founder Nima Negahban), who had a GPU background, said, ‘Why can’t we build a database on a GPU?’, which has thousands of cores, vs a CPU, which has 64 cores tops.”

The GPU-accelerated database prototype was successfully tested and adopted by the Army, and the company, under the name GPUdb, took its technology out to the commercial markets. Last year, the company rebranded itself again as Kinetica, and this year it completed an investment round that raised total venture investment to more than $60 million. Customers include the U.S. Postal Service and GlaxoSmithKline.

Mizell said a feature of Kinetica is that it’s built around commonly used technologies, such as SQL92 and Kafka, familiar to database users.

“We’re ingesting with those tools that everyone’s using, they plug straight into Kinetica with no effort, we can ingest the data at the speed they can consume it, no one else can do that, and also do real time queries and real time aggregations with SQL92,” Mizell said. “You can ingest with CPUs and query with GPUs at the same time, thus giving you a real time view of your data.”

At the recent Tableau conference, Kinetica announced the newest release of its GPU database, which according to the company includes a new OpenGL Framework for accelerated geospatial capabilities as well as enterprise-grade features. In particular, the company said, rendering of polygon and line geometries with high vertex counts, scatter plots and complex graphs or charts can see up to a 100x improvement.

“Enterprises have spent the last decade deploying systems to store and manage massive data sets,” said 451 Research’s senior analyst, data platforms and analytics, James Curtis. “Now these enterprises are looking to extract business value from those data sets at real-time speeds and at an affordable price point, which is precisely where Kinetica’s latest GPU database release takes aim.”