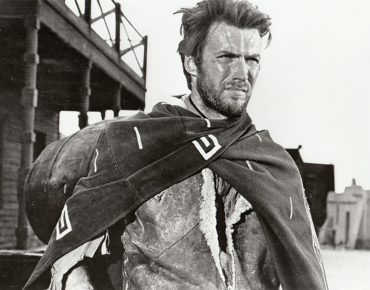

The Good, Bad & Ugly of Public Clouds: Plenty of Each

Source: By movie studio - eBay, Public Domain, https://commons.wikimedia.org/w/index.php?curid=25888150

Anything as immense, impactful and powerful as public cloud computing is bound to be variegated, encompassing the good, the bad and the ugly. As AWS, Azure, Google Cloud, IBM Cloud and the other platforms gain more market adoption – usually in tandem with an on-prem data center – the benefits and landmines of cloud computing for compute- and data-intensive applications are becoming better understood.

The good and the bad extend across technology, integration, cost control and change management challenges:

How do we know which workloads and data should be put in the cloud or kept on-prem? How can we build a comparative on-prem / off-prem business case that takes all costs into account? How do we ensure data security and regulatory compliance with our cloud workloads? How do we help our staff handle the wrenching change of integrating cloud computing within our IT infrastructure? How do we incorporate best practices that maximize our use of the cloud? Should our cloud strategy replicate our data center, or be something different?

These and other issues were discussed by a panel of cloud technologists at Tabor Communications’ recent Advanced Scale Forum in Austin, moderated by Merle Giles (former industrial lead at the National Center for Supercomputing Applications at the University of Illinois and founder of the advisory firm Moonshot Research).

The Good

The public cloud advantage most commonly highlighted by the panelists is flexibility. In an enterprise compute world that increasingly values experimentation, the cloud allows instances for compute- and data-intensive applications to be spun up and torn down, and additional compute to be scaled up instantly when workloads burst beyond the capacity of on-prem resources.

“The good news about hyperscaler public clouds,” said Ari Berman, vice president and GM of consulting services at The BioTeam, which offers HPC and IT guidance for life sciences organizations, “is it really does enable the type of workloads and expandability that organizations need on a temporary basis. You don’t have to go build a 100,000-core machine to do your one week of data crunching. That’s one of the most powerful things about it.

“As long as you have a credit card,” he said, “you can swipe and go to one of the clouds, spin up a couple of hundred thousand cores, go for a week, cry a little at the cost, but get the thing done and spin it down.”

Barbara Eckman, Ph.D., principal data architect, Comcast, expanded on Berman’s comment, saying “you can spin up and use them to do some machine learning or reporting, and then shut them down and you don’t have to pay for them being up 24/7.”

For Steve Hebert, CEO of cloud HPC services provider Nimbix, a key benefit of public clouds is their ability to deliver true supercomputing-class power, something doubted by many when Hebert’s company came on the scene in 2010.

At that time, “if you were doing grid-based computing or loosely-coupled types of workloads, you could definitely scale out,” on a public cloud platform, Hebert said. “But for the real, critical applications that required either computation acceleration or very fast low latency fat network fabrics in the cloud, that was something that was…simply not possible. The good news is now that it’s very much possible and the cloud providers across the globe are investing heavily in these advanced technologies to support not just the initial web services side of IT workloads but also these advanced, data intensive types of workloads.”

According to Dan Powers, managing director at technology consulting firm Accenture, a great advantage of public clouds is the range of technologies they offer and their ability to remain more current with new technologies than is typically possible with on-premises data centers.

“The good that I’ve seen is the options that we all have today from a strategy perspective,” Powers said. “There are more technologies you can start using internally to get better efficiencies at scale and save costs on your own on-prem environment that can be coupled in with offerings in industry around hybrid cloud… On the public cloud side, we always think of IaaS, but there are amazing choices for PaaS and SaaS on the public cloud as well.”

Competition among the public cloud providers also is driving much innovation and a wider variety of solutions, Powers said.

“You may not see it behind the scenes but it is driving tremendous competition and dropping of costs and price,” he said. “Customers look at different options for a hybrid compute strategy, and people will ask what should we do with our applications, should we migrate to the cloud, should we keep them on-prem, should we think about private cloud…? Competition is one thing, but they are also changing their underlying hardware much quicker than one could do on-prem – so even during a five-year horizon, you see huge price-performance increases.”

This particularly applies to companies with advanced analytics strategies, Powers said. “The technology out there for big data and machine learning is unbelievable, it is way better than you can do on-prem unless you have an unlimited budget, unlimited time and top professionals.”

Having said that, Powers also pointed out that “it’s still early days – only 5 percent of enterprise workloads are actually sitting in cloud at this time. But the time to take advantage of the cloud is now.”

Rick Friedman, principal program manager for Microsoft Azure (who came to Microsoft when it acquired HPC cloud specialist Cycle Computing last year) said a positive cloud development is the increasing cloud-savviness of enterprises.

“On the good side has been the recognition that cloud is not the answer, it’s an answer,” he said. “The other piece…I see in cloud computing, particularly in HPC-driven and large computation cloud computing, is that it’s driven by the commercial side of the business, as opposed to the academic side of the business. Historically, a lot of the advances in the models, methodologies and best practices we’ve seen have all come out of the academic communities. And I think what I see from my world at Cycle and Microsoft is that the commercial aspects and commercial entities are driving evolution of cloud and its usage.”

The Bad

Moving over to cloud’s bad side, Berman discussed the “lack of understanding of how to use the cloud, how to use it effectively.”

The cloud should not be treated as a replica of the on-prem data center, he said, “it should be treated as a scriptable orchestratable architecture that can expand and contract as you need. If you utilize it correctly it’s a great place for long-term storage for very large data, especially when you have lots of organizations computing on the data or using those data in interesting fashions. So large public data sets, reference data for life science, those sorts of things, are really good to sit in the cloud, especially when they’re 10 PBytes in size, rather than having to download all that data through a 100 MByte connection (and your IT department won’t spring for something bigger).”
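To put Berman’s figures in perspective, here is a back-of-envelope sketch of how long such a download would take. It assumes decimal units, a link that stays fully saturated for the entire transfer, and a reading of “100 MByte” as 100 megabytes per second; the second figure shows the alternative reading of 100 megabits per second. The function and variable names are illustrative only.

```python
# Rough transfer-time estimate for a very large data set.
# Assumptions (not from the panel): decimal units, no protocol
# overhead, and a link that stays saturated the whole time.

SECONDS_PER_DAY = 86_400
PB = 10**15   # bytes in a petabyte (decimal)
MB = 10**6    # bytes in a megabyte (decimal)


def transfer_days(data_bytes: float, link_bytes_per_sec: float) -> float:
    """Return the days needed to move data_bytes at a sustained rate."""
    if link_bytes_per_sec <= 0:
        raise ValueError("link rate must be positive")
    return data_bytes / link_bytes_per_sec / SECONDS_PER_DAY


dataset = 10 * PB

# Reading 1: 100 megabytes per second.
days_at_100_mbyte = transfer_days(dataset, 100 * MB)

# Reading 2: 100 megabits per second (8 bits per byte).
days_at_100_mbit = transfer_days(dataset, 100 * MB / 8)

print(f"100 MB/s:   {days_at_100_mbyte:,.0f} days")
print(f"100 Mbit/s: {days_at_100_mbit:,.0f} days")
```

Under either reading the transfer takes years rather than days, which is the practical argument for leaving large shared reference data in the cloud and computing on it there.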

Another problematic area for the cloud: cost management.

“We’ve had lots of our customers who just said, ‘I want to go all cloud, I don’t want to have any data centers anymore,’” Berman said. “And we helped them do that while telling them: ‘Don’t do that.’ But they did it anyway. And then five years later, a lot of them had this cloud sobriety moment: they say, ‘That was awesome, but we need to go back to a hybrid infrastructure.’

“I think that’s one of the hardest things, is the lack of understanding of what cloud can do for you and what it can’t,” he said. “I think serverless stuff is really awesome, and every cloud provider has some version of those services. And if you’re going multi-cloud, people think you’re going to do the same in all the clouds and get resiliency that way. Um, don’t do that. Use them for what they’re good for, they all have different service sets.”

Eckman also raised the problem of cloud costs.

“They get you in cheap, but hit you when you scale up, and it gets expensive really fast,” she said.

Contributing to this problem: arcane pricing rules.

“You can think you’ve planned a really thrifty approach, but it can blow up in your face,” she said. “There’s a difference between capex and opex. When are finance people going to learn the money that you put into a cloud should come from the capex budget, at least some of it, because you are putting it into the cloud instead of buying big hardware for the data center? That’s a major problem because of these huge opex budgets that are much bigger than they should be, and you’re not using much capex at all. I find that enraging and really silly.”

For Hebert, a bad aspect of the cloud is complexity.

“The rise in the volume and number of services across all sorts of different IT solutions – it can be very complex, and that’s where some of the cost comes in as you try to manage complexity,” Hebert said.

He also said that in accounting for the cost of cloud, it’s important for users to calculate the value of insights derived. “It’s the cost per answer that they get, not just the cost for compute and the infrastructure, but the business value that they get. How do we translate from measuring server hours and how many terabytes and petabytes you have stored, and start looking at the business value I’m taking away, instead of this traditional on-prem / off-prem type of debate.”

Powers said transitioning to cloud within the existing IT infrastructure can present a major challenge, and success in this area requires strong senior leadership.

“When I think about the bad, it’s not just about cloud, it’s any technology change we’ve seen,” he said. “There’s got to be a top senior executive level business case for the organization, at the CIO-CFO level. And it needs to be bottom-up.”

Powers said many managers have told him their models show that they can operate 20 to 30 percent less expensively on-premises than in the cloud. He then asks to see their business case, which often fails to account for on-prem power, real estate and systems management costs.

“The organizations that I’ve seen be most successful around cloud and the change to cloud is (when) the CFO and the CIO have a program in place that’s based on a business case for the savings you’re going to see across your organization. The finance people are not IT people, and the IT people are not finance. But if you get them together…and you (figure out) how much it costs to run a server on a minute-by-minute basis and how much your storage is truly costing, then you can compare it against anything.

“You can take that business case and give an RFP to all the cloud providers and ask them to ‘beat this,’” Powers said. “You’ve got to go for it, you’ve got to drive the organization to want to do that change and build that business case. That will remove the bad and get you into these pretty cool technology areas and take advantage of them.”
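The minute-by-minute comparison Powers describes can be sketched in a few lines. Everything below is a hypothetical illustration: the class, the dollar amounts, the utilization figure and the cloud rate are invented for the example and do not come from the panel or from any provider’s price list. The point is only to show how leaving out power, real estate and systems management changes the answer.

```python
from dataclasses import dataclass

MINUTES_PER_YEAR = 365 * 24 * 60


@dataclass
class OnPremServer:
    """Hypothetical yearly cost model for one on-prem server."""
    hardware_cost: float         # purchase price
    lifetime_years: float        # depreciation period
    power_per_year: float        # electricity and cooling
    real_estate_per_year: float  # floor space share
    admin_per_year: float        # systems management share
    utilization: float           # fraction of minutes doing useful work

    def annual_cost(self, include_overheads: bool = True) -> float:
        cost = self.hardware_cost / self.lifetime_years
        if include_overheads:
            cost += (self.power_per_year
                     + self.real_estate_per_year
                     + self.admin_per_year)
        return cost

    def cost_per_busy_minute(self, include_overheads: bool = True) -> float:
        busy_minutes = MINUTES_PER_YEAR * self.utilization
        return self.annual_cost(include_overheads) / busy_minutes


# All figures are made up for illustration.
server = OnPremServer(
    hardware_cost=10_000, lifetime_years=5,
    power_per_year=600, real_estate_per_year=400, admin_per_year=1_000,
    utilization=0.5,
)
cloud_rate_per_minute = 0.012  # hypothetical on-demand price

hardware_only = server.cost_per_busy_minute(include_overheads=False)
fully_loaded = server.cost_per_busy_minute(include_overheads=True)

print(f"on-prem, hardware only: ${hardware_only:.4f} per busy minute")
print(f"on-prem, fully loaded:  ${fully_loaded:.4f} per busy minute")
print(f"cloud, on demand:       ${cloud_rate_per_minute:.4f} per minute")
```

With these invented numbers, the hardware-only model makes on-prem look cheaper than the cloud, while the fully loaded model reverses the result. A real business case would need the organization’s own figures, plus cloud-side costs such as storage and data egress that this sketch ignores.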

Friedman discussed the problem that some organizations encounter when moving their HPC-class compute- and data-intensive workloads, which over time can become a small world unto themselves, to the cloud.

“Historically, HPC large computation, even the data and AI pieces of it, all of that often came from what’s called the product side of business,” Friedman said, “and they have their own perspectives, revenue goals, and so forth. Then you have this function called IT. And in many organizations, where that product group has purchased an HPC system and over time built a beautiful brick wall around that resource, the problem is when you go to the cloud and you have to work with those people and they provide value, they really do, but now you have to get outside of that wall… Democratization…doesn’t work unless there are rules – one of the beauties of having a walled garden is (there are) no rules. I bring this up because people don’t understand this, that’s why costs can get out of line.”

The Ugly

Lawyers tend to be unpopular, but Friedman recommended that companies moving toward the cloud for advanced scale workloads should bring in legal counsel.

“Do not underestimate the impact of lawyers on your project,” he said. “There aren’t industry-accepted best practices on everything… For every company I deal with the questions of data protection and data privacy. Bring them (lawyers) into your projects as soon as possible.”

For Hebert, deep integrations of cloud workloads with existing IT architectures and existing IT processes or data governance processes can be very ugly.

“Munging that into a cloud experience in some way, that can be very difficult,” Hebert said, “and it can really slow down the process. Software licensing is a good example because that’s where sometimes IT plumbing needs cloud plumbing, and how do you get that together in a way that’s secure and is compliant with existing IT and security policy?”

The problems are solvable, he said, “you have to start with aligning strongly on the expectations and then how those get papered up. But I think there is great progress being made on all those fronts.”

For Powers, cloud ugliness is – as mentioned above – organizations that try to directly transfer and replicate their old IT set-up in a public cloud.

“I’ve seen so many white boards (with the client saying) ‘this is what we’ve got, now give me some of this on the cloud,’” he said. “And we’re taking those exact architectural approaches and applying that directly to the cloud when there’s drastically different ways of doing things in the cloud if you would take a new sheet of paper and go the other way and say ‘what can we do?’”

He said only about 10 percent of clients take a fresh perspective on optimizing the cloud.

“One client in the financial industry took their massive application for the mainframe that they got ported to distributed systems through tiered architecture, and spent three months going through course after course after course with the cloud providers,” he said. “And then they got in a room and asked, ‘How can we do things differently? How can we take advantage of a database that can handle petabyte data sets with trillions of rows and columns?’ If you have that, and you know it, and you understand it, and you can apply it to the problem, they’re going to be able to search and re-build this application in a fraction of the time.

“So the ugly is the architectures we’ve had in the past and just trying to make them run in the cloud. But if you take a step back it can be pretty beautiful.”

Berman offered up three ugly cloud phenomena.

Too many organizations, he said, neglect to put in place adequate connectivity to the cloud.

“Networking is a fundamental need if you’re going to go to any architecture outside of your data center for any data intensive applications, period. One hundred megabytes and a couple of T1’s just isn’t going to work. We’ve had customers who go to their cable companies and get a cable modem in their data center because it’s faster than what their own org provides.”

Next, Berman brought up cloud closed-mindedness. “There’s an enormous amount of dogma around what the cloud is, it’s almost like a belief structure at this point rather than a rational set of architectures that people can use.”

In healthcare and life sciences, he said, the prevailing dogma tends to either condemn or too enthusiastically embrace cloud. “It swings to the two extremes, not the middle where rational thought exists. The thinking is either ‘we have to do it all in the cloud, this is awesome’; or ‘this is terrible, we’re going to lose our collective heads doing this.’”

For example, Berman said, data security regulations, such as GDPR and HIPAA, convince some organizations that a public cloud can’t be used. “But the truth is, if you architect it correctly, like anything, the cloud’s probably more secure than anything you can build yourself.”

A third cloud factor to be wary of, Berman said, is sales and marketing hype that sets customers up for disappointment. “When people are trying to go to the cloud, the sales and marketing department is doing a great job of selling but not of demystifying. The end result is people go in with a set of expectations and come out having been dragged through the mud.”