Supercomputer-Powered Enterprise AI Platform from NetApp and Nvidia

Bestowing the blessings of AI, machine learning and deep learning upon more than the 1 percent means easing entry into the mysteries of AI. This requires new combinations of technologies with capabilities otherwise possessed only by data scientists, those AI-capable unicorns who are worth their body weight in gold and who are so often lured away by bags of it from the FANG companies and hyperscalers, to the exclusion of the bottom 99 percent. The idea behind many new AI solutions is to leapfrog, or at least shorten, the agonies of AI initiatives for organizations whose IT staffs are normally, not abnormally, intelligent.

NetApp and Nvidia, two hot-growth Wall Street darlings, have partnered on such an offering, called NetApp Ontap AI, that combines NetApp’s hybrid cloud data services and AFF A800 cloud-connected all-flash storage with Nvidia’s GPU-powered DGX supercomputers.

The offering also includes NetApp Data Fabric, a data management technology designed to create an edge-core-cloud data pipeline that integrates diverse data sources and attacks the challenges of controlling distributed data stores so that current and accessible data is available for AI projects.

“Unfortunately, many organizations still underestimate how much AI depends on an ability to marshal and manage vast quantities of data,” said Octavian Tanase, SVP of NetApp’s Data ONTAP operating system group, in a blog. “…Ontap AI lets you simplify, accelerate, and scale the data pipeline needed for AI to gain deeper understanding in less time.”

Ontap AI is driven by Nvidia’s DGX-1, launched in April 2017 and billed as the industry’s first AI supercomputer optimized for deep learning. The system, capable of 1 petaflop of throughput, includes as much as 256GB of GPU RAM and eight Nvidia Tesla V100 Tensor Core GPUs in a hybrid cube-mesh topology using Nvidia NVLink.

“We’ve worked with NetApp to distill hard-won design insights and best practices into a replicable formula for rolling out an optimal architecture for AI and deep learning,” said Jim McHugh, DGX vice president and general manager at Nvidia. “It’s a formula that eliminates the guesswork of designing infrastructure, providing an optimal configuration of GPU computing, storage and networking.”

In his blog, Tanase cited a hypothetical Ontap AI workload: better treatments for asthma, of which there are 25 million sufferers in the U.S. Adding sensors to an asthma inhaler opens a huge opportunity to correlate usage and location information among patients, said Tanase.

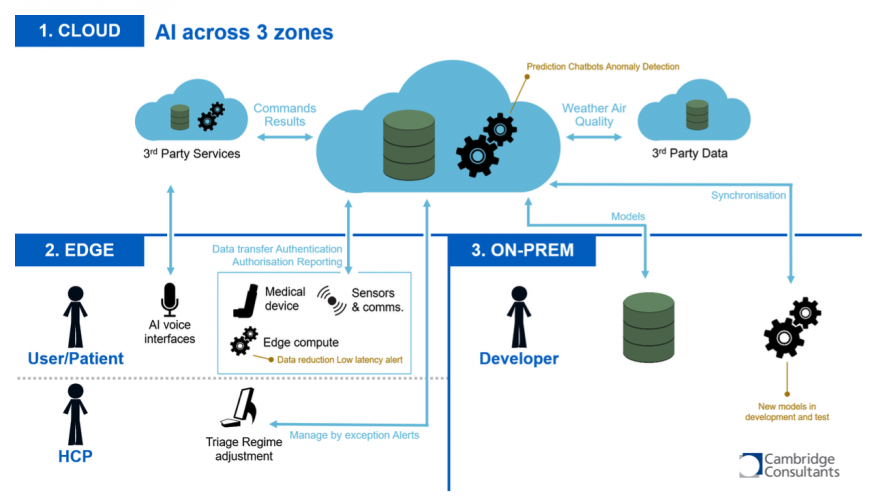

“Combining patient data with information on weather, air quality, pollen counts, and so on in real time can help patients avoid potential triggers, thereby minimizing risk and improving overall health,” he said. “…The smart inhaler example is a good model for thinking about the requirements of AI at scale. Data flows from thousands of devices at the edge. That data is combined with outside data sets during training in a core on-premises data center with GPU acceleration. The resulting inference model is deployed in the cloud to analyze new data points and identify and act on trigger events.”
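The flow Tanase describes (edge events joined with outside data, a model trained at the core, inference applied to new data points) can be illustrated with a deliberately tiny Python sketch. Everything in it is an assumption made for illustration: the `InhalerEvent` and `AirReading` records, the region-and-hour join key, and the use of a simple average pollen index as a stand-in for a trained model. It does not represent Ontap AI, the Data Fabric, or any NetApp or Nvidia API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InhalerEvent:
    """Hypothetical edge record: one inhaler use by one patient."""
    patient_id: str
    region: str
    hour: int  # hour bucket in which the inhaler was used


@dataclass(frozen=True)
class AirReading:
    """Hypothetical outside data set: environmental reading per region and hour."""
    region: str
    hour: int
    pollen_index: float


def join_events(events, readings):
    """Core step 1: attach the matching environmental reading to each edge event."""
    by_key = {(r.region, r.hour): r for r in readings}
    return [
        (e, by_key[(e.region, e.hour)])
        for e in events
        if (e.region, e.hour) in by_key
    ]


def fit_threshold(joined):
    """Core step 2: a stand-in for training. Returns the mean pollen index
    observed at the moments patients used their inhalers."""
    if not joined:
        raise ValueError("no joined records to train on")
    values = [reading.pollen_index for _, reading in joined]
    return sum(values) / len(values)


def is_trigger(reading, threshold):
    """Inference step: flag a new reading that meets or exceeds the threshold."""
    return reading.pollen_index >= threshold
```

In a real deployment the training step would run on GPU-accelerated infrastructure over far larger data sets; the point here is only the shape of the pipeline, in which each stage depends on the previous one receiving its data on time.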

But a bottleneck anywhere in the schema idles expensive infrastructure, increases costs and wastes the time of data scientists troubleshooting infrastructure, he said – and it puts patients at risk. The combination of the NetApp Data Fabric and Nvidia compute has been designed for non-disruptive data access at scale.

According to Tanase, Cambridge Consultants, a NetApp customer and AI partner, is exploring the potential of smart inhalers using the same technologies in Ontap AI.

“IAS is excited to be a launch partner for the Ontap AI proven architecture,” said Amy Rao, CEO of IAS, a VAR. “Moving large datasets from edge to core or core to cloud is becoming impractical, and customers are looking for ways to maximize data value independent of location. The Data Fabric—combined with NetApp cloud-connected all-flash technology and Nvidia GPU-assisted compute and all packaged in a pre-validated configuration—gives customers the choice, control, efficiency, and availability required for deep learning environments.”